Introduction

About three years ago, the LLVM framework started to pique my interest for a lot of different reasons. This collection of industrial strength compiler technology, as Latner said in 2008, was designed in a very modular way. It also looked like it had a lot of interesting features that could be used in a lot of (different) domains: code-optimization (think deobfuscation), (architecture independent) code obfuscation, static code instrumentation (think sanitizers), static analysis, for runtime software exploitation mitigations (think cfi, safestack), power a fuzzing framework (think libFuzzer), ..you name it.

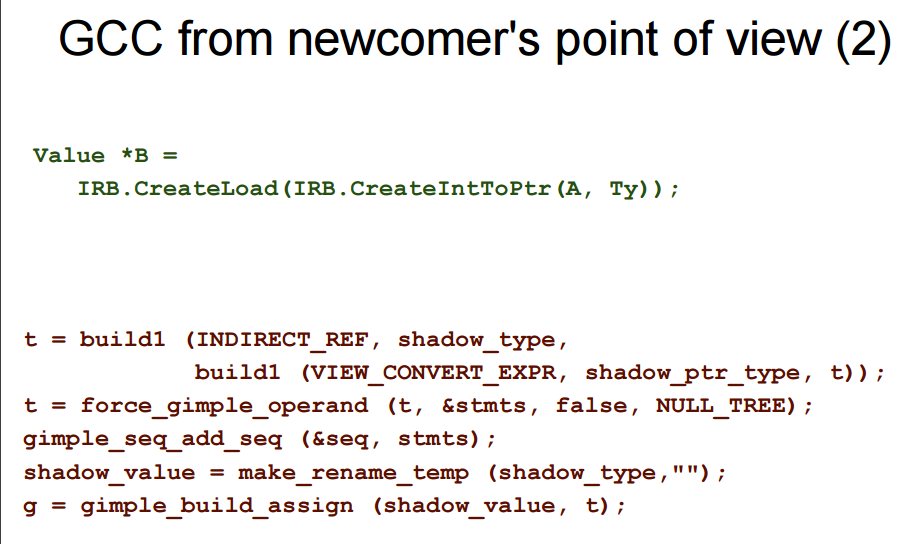

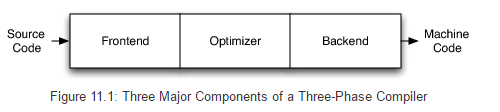

A lot of the power that came with this giant library was partly because it would operate in mainly three stages, and you were free to hook your code in any of those: front-end, mid-end, back-end. Other strengths included: the high number of back-ends, the documentation, the C/C++ APIs, the community, ease of use compared to gcc (see below from kcc's presentation), etc.

Background

Source of inspiration

If you haven't heard of the new lcamtuf's coverage-guided fuzzer, it's most likely because you have lived in a cave for the past year or two as it has been basically mentioned everywhere (now on this blog too!). The sources, the documentation and the afl-users group are really awesome resources if you'd like to know a little bit more and follow its development.

What you have to know for this post though, is that the fuzzer generates test cases and will pick and keep the interesting ones based on the code-coverage that they will exercise. You end-up with a set of test cases covering different part of the code, and can spend more time hammering and mutating a small number of files, instead of a zillion. It is also packed with clever hacks that just makes it one of the most used/easy fuzzer to use today (don't ask me for proof to back this claim).

In order to measure the code-coverage, the first version of AFL would hook in the compiler toolchain and instrument basic block in the .S files generated by gcc. The instrumentation flips a bit in a bitmap as a sign of "I've executed this part of the code". This tiny per-block static instrumentation (as opposed to DBI based ones) makes it hella fast, and can actually be used while fuzzing without too much of overheard. After a little bit of time, an LLVM based version has been designed (by László Szekeres and lcamtuf) in order to be less hacky, architecture independent (bonus that you get for free when writing a pass), and very elegant (no more reading/modifying raw .S files). The way this has been implemented is hooking into the mid-end in order to statically add the extra instrumentation afl-fuzz needs to have the code-coverage feedback. This is now known as afl-clang-fast.

A little later, some discussions on the googlegroup led the readers to believe that knowing "magics" used by a library would make the fuzzing more efficient. If I know all the magics and have a way to detect where they are located in a test-case, then I can use them instead of bit-flipping and hope it would lead to "better" fuzzing. This list of "magics" is called a dictionary. And what I just called "magics" are "tokens". You can provide such a dictionary (list of tokens) to afl via the -X option. In order to ease, automate the process of semi-automatically generate a dictionary file, lcamtuf developed a runtime solution based on LD_PRELOAD and instrumenting calls to memory compare like routines: strcmp, memcmp, etc. If one of the argument comes from a read-only section, then it is most likely a token and it is most likely a good candidate for the dictionary. This is called afl-tokencap.

afl-llvm-tokencap

What if instead of relying on a runtime solution that requires you to:

- Have built a complete enough corpus to exercise the code that will expose the tokens,

- Recompile your target with a set of extra options that tell your compiler to not use the built-ins version of

strcmp/strncmp/etc, - Run every test cases through the new binary with the libtokencap

LD_PRELOAD'd.

..we build the dictionary at compile time. The idea behind this, is to have another pass hooking the build process, is looking for tokens at compile time and is building a dictionary ready to use for your first fuzz run. Thanks to LLVM this can be written with less than 400 lines of code. It is also easy to read, easy to write and is architecture independent as it is even running before the back-end.

This is the problem that I will walk you through in this post, AKA yet-another-example-of-llvm-pass. Here we are anyway, an occasion to get back at blogging one might even say!

Before diving in, here what we actually want the pass to do:

- Walk through every instructions compiled, find all the function calls,

- When the function call target is one of the function of interest (

strcmp,memcmp, etc), we extract the arguments, - If one of the arguments is an hard-coded string, then we save it as a token in the dictionary being built at compile time.

afl-llvm-tokencap-pass.so.cc

In case you are already very familiar with LLVM and its pass mechanism, here is afl-llvm-tokencap-pass.so.cc and the afl.patch - it is about 300 lines of C++ and is pretty straightforward to understand.

Now, for all the others that would like a walk-through the source code let's do it.

AFLTokenCap class

The most important part of this file is the AFLTokenCap class which is walking through the LLVM IL instructions looking for tokens. LLVM gives you the possibility to work at different granularity levels when writing a pass (more granular to the less granular): BasicBlockPass, FunctionPass, ModulePass, etc. Note that those are not the only ones, there are quite a few others that work slightly differently: MachineFunctionPass, RegionPass, LoopPass, etc.

When you are writing a pass, you write a class that subclasses a *Pass parent class. Doing that means you are expected to implement different virtual methods that will be called under specific circumstances - but basically you have three functions: doInitialization, runOn* and doFinalization. The first one and the last one are rarely used, but they can provide you a way to execute code once all the basic-blocks have been run through or prior. The runOn* function is important though: this is the function that is going to get called with an LLVM object you are free to walk-through (Analysis passes according to the LLVM nomenclature) or modify (Transformation passes) it. As I said above, the LLVM objects are basically Module/Function/BasicBlock instances. In case it is not that obvious, a Module (a .c file) is made of Functions, and a Function is made of BasicBlocks, and a BasicBlock is a set of Instructions. I also suggest you take a look at the HelloWorld pass from the LLVM wiki, it should give you another simple example to wrap your head around the concept of pass.

For today's use-case I have chosen to subclass BasicBlockPass because our analysis doesn't need anything else than a BasicBlock to work. This is the case because we are mainly interested to capture certain arguments passed to certain function calls. Here is what looks like a function call in the LLVM IR world:

%retval = call i32 @test(i32 %argc)

call i32 (i8*, ...)* @printf(i8* %msg, i32 12, i8 42) ; yields i32

%X = tail call i32 @foo() ; yields i32

%Y = tail call fastcc i32 @foo() ; yields i32

call void %foo(i8 97 signext)

%struct.A = type { i32, i8 }

%r = call %struct.A @foo() ; yields { i32, i8 }

%gr = extractvalue %struct.A %r, 0 ; yields i32

%gr1 = extractvalue %struct.A %r, 1 ; yields i8

%Z = call void @foo() noreturn ; indicates that %foo never returns normally

%ZZ = call zeroext i32 @bar() ; Return value is %zero extended

Every time AFLTokenCap::runOnBasicBlock is called, the LLVM mid-end will call into our analysis pass (either statically linked into clang/opt or will dynamically load it) with a BasicBlock passed by reference. From there, we can iterate through the set of instructions contained in the basic block and find the call instructions. Every instructions subclass the top level llvm::Instruction class - in order to filter you can use the dyn_cast<T> template function that works like the dynamic_cast<T> operator but does not rely on RTTI (and is more efficient - according to the LLVM coding standards). Used in conjunction with a range-based for loop on the BasicBlock object you can iterate through all the instructions you want.

bool AFLTokenCap::runOnBasicBlock(BasicBlock &B) {

for(auto &I_ : B) {

/* Handle calls to functions of interest */

if(CallInst *I = dyn_cast<CallInst>(&I_)) {

// [...]

}

}

}

Once we have found a llvm::CallInst instance, we need to:

- Get the name of the called function, assuming it is not an indirect target: llvm::CallInst::getCalledFunction

- Further the analysis only if only it is a function of interest:

strcmp,strncmp,strcasecmp,strncasecmp,memcmp - Extract the arguments passed to the function: llvm::CallInst::getNumArgOperands, llvm::CallInst::getArgOperand

- Detect hard-coded strings (we will consider a subset of them as tokens)

Not sure you have noticed yet, but all the objects we are playing with are not only subclassed from llvm::Instruction. You also have to deal with llvm::Value which is an even more top-level class (llvm::Instruction is a child of llvm::Value). But llvm::Value is also used to represent constants: think of hard-coded strings, integers, etc.

Detecting hard-coded strings

In order to detect hard-coded strings in the arguments passed to function calls, I decided to filter out the llvm::ConstantExpr. As its name suggests, this class handles "a constant value that is initialized with an expression using other constant values".

The end goal, is to find llvm::ConstantDataArrays and to retrieve their raw values - those will be the hard-coded strings we are looking for.

/home/over/workz/afl-2.35b/afl-clang-fast -c -W -Wall -O3 -funroll-loops -fPIC -o png.pic.o png.c

[...]

afl-llvm-tokencap-pass 2.35b by <0vercl0k@tuxfamily.org>

[...]

[+] Call to memcmp with constant "\x00\x00\xf6\xd6\x00\x01\x00\x00\x00\x00\xd3" found in png.c/png_icc_check_header

At this point, the pass basically does what the token capture library is able to do.

Harvesting integer immediate

After playing around with it on libpng though, I quickly was wondering why the pass would not extract all the constants I could find in one of the dictionary already generated and shipped with afl:

// png.dict

section_IDAT="IDAT"

section_IEND="IEND"

section_IHDR="IHDR"

section_PLTE="PLTE"

section_bKGD="bKGD"

section_cHRM="cHRM"

section_fRAc="fRAc"

section_gAMA="gAMA"

section_gIFg="gIFg"

section_gIFt="gIFt"

section_gIFx="gIFx"

section_hIST="hIST"

section_iCCP="iCCP"

section_iTXt="iTXt"

...

Some of those can be found in the function png_push_read_chunk in the file pngpread.c for example:

//png_push_read_chunk

#define png_IHDR PNG_U32( 73, 72, 68, 82)

// ...

if (chunk_name == png_IHDR)

{

if (png_ptr->push_length != 13)

png_error(png_ptr, "Invalid IHDR length");

PNG_PUSH_SAVE_BUFFER_IF_FULL

png_handle_IHDR(png_ptr, info_ptr, png_ptr->push_length);

}

else if (chunk_name == png_IEND)

{

PNG_PUSH_SAVE_BUFFER_IF_FULL

png_handle_IEND(png_ptr, info_ptr, png_ptr->push_length);

png_ptr->process_mode = PNG_READ_DONE_MODE;

png_push_have_end(png_ptr, info_ptr);

}

else if (chunk_name == png_PLTE)

{

PNG_PUSH_SAVE_BUFFER_IF_FULL

png_handle_PLTE(png_ptr, info_ptr, png_ptr->push_length);

}

In order to also grab those guys, I have decided to add the support for compare instructions with integer immediate (in one of the operand). Again, thanks to LLVM this is really easy to pull that off: we just need to find the llvm::ICmpInst instructions. The only thing to keep in mind is false positives. In order to lower the false positives rate, I have chosen to consider an integer immediate as a token only if only it is fully ASCII (like the libpng tokens above)

We can even push it a bit more, and handle switch statements via the same strategy. The only additional step is to retrieve every cases from in the switch statement: llvm::SwitchInst::cases.

/* Handle switch/case with integer immediates */

else if(SwitchInst *SI = dyn_cast<SwitchInst>(&I_)) {

for(auto &CIT : SI->cases()) {

ConstantInt *CI = CIT.getCaseValue();

dump_integer_token(CI);

}

}

Limitations

The main limitation is that as you are supposed to run the pass as part of the compilation process, it is most likely going to end-up compiling tests or utilities that the library ships with. Now, this is annoying as it may add some noise to your tokens - especially with utility programs. Those ones usually parse input arguments and some use strcmp like function with hard-coded strings to do their parsing.

A partial solution (as in, it reduces the noise, but does not remove it entirely) I have implemented is just to not process any functions called main. Most of the cases I have seen (the set of samples is pretty small I won't lie >:]), this argument parsing is made in the main function and it is very easy to not process it by blacklisting it as you can see below:

bool AFLTokenCap::runOnBasicBlock(BasicBlock &B) {

// [...]

Function *F = B.getParent();

m_FunctionName = F->hasName() ? F->getName().data() : "unknown";

if(strcmp(m_FunctionName, "main") == 0)

return false;

Another thing I wanted to experiment on, but did not, was to provide a regular expression like string (think "test/*") and not process every files/path that are matching it. You could easily blacklist a whole directory of tests with this.

Demo

I have not spent much time trying it out on a lot of code-bases (feel free to send me your feedbacks if you run it on yours though!), but here are some example results with various degree of success.. or not. Starting with libpng:

over@bubuntu:~/workz/lpng1625$ AFL_TOKEN_FILE=/tmp/png.dict make

cp scripts/pnglibconf.h.prebuilt pnglibconf.h

/home/over/workz/afl-2.35b/afl-clang-fast -c -I../zlib -W -Wall -O3 -funroll-loops -o png.o png.c

afl-clang-fast 2.35b by <lszekeres@google.com>

afl-llvm-tokencap-pass 2.35b by <0vercl0k@tuxfamily.org>

afl-llvm-pass 2.35b by <lszekeres@google.com>

[+] Instrumented 945 locations (non-hardened mode, ratio 100%).

[+] Found alphanum constant "acsp" in png.c/png_icc_check_header

[+] Call to memcmp with constant "\x00\x00\xf6\xd6\x00\x01\x00\x00\x00\x00\xd3" found in png.c/png_icc_check_header

[+] Found alphanum constant "RGB " in png.c/png_icc_check_header

[+] Found alphanum constant "GRAY" in png.c/png_icc_check_header

[+] Found alphanum constant "scnr" in png.c/png_icc_check_header

[+] Found alphanum constant "mntr" in png.c/png_icc_check_header

[+] Found alphanum constant "prtr" in png.c/png_icc_check_header

[+] Found alphanum constant "spac" in png.c/png_icc_check_header

[+] Found alphanum constant "abst" in png.c/png_icc_check_header

[+] Found alphanum constant "link" in png.c/png_icc_check_header

[+] Found alphanum constant "nmcl" in png.c/png_icc_check_header

[+] Found alphanum constant "XYZ " in png.c/png_icc_check_header

[+] Found alphanum constant "Lab " in png.c/png_icc_check_header

[...]

over@bubuntu:~/workz/lpng1625$ sort -u /tmp/png.dict

"abst"

"acsp"

"bKGD"

"cHRM"

"gAMA"

"GRAY"

"hIST"

"iCCP"

"IDAT"

"IEND"

"IHDR"

"iTXt"

"Lab "

"link"

"mntr"

"nmcl"

"oFFs"

"pCAL"

"pHYs"

"PLTE"

"prtr"

"RGB "

"sBIT"

"sCAL"

"scnr"

"spac"

"sPLT"

"sRGB"

"tEXt"

"tIME"

"tRNS"

"\x00\x00\xf6\xd6\x00\x01\x00\x00\x00\x00\xd3"

"XYZ "

"zTXt"

On sqlite3 (sqlite.dict):

over@bubuntu:~/workz/sqlite3$ AFL_TOKEN_FILE=/tmp/sqlite.dict [/home/over/workz/afl-2.35b/afl-clang-fast stub.c sqlite3.c -lpthread -ldl -o a.out

[...]

afl-llvm-tokencap-pass 2.35b by <0vercl0k@tuxfamily.org>

afl-llvm-pass 2.35b by <lszekeres@google.com>

[+] Instrumented 47546 locations (non-hardened mode, ratio 100%).

[+] Call to strcmp with constant "unix-excl" found in sqlite3.c/unixOpen

[+] Call to memcmp with constant "SQLite format 3" found in sqlite3.c/sqlite3BtreeBeginTrans

[+] Call to memcmp with constant "@ " found in sqlite3.c/sqlite3BtreeBeginTrans

[+] Call to strcmp with constant "BINARY" found in sqlite3.c/sqlite3_step

[+] Call to strcmp with constant ":memory:" found in sqlite3.c/sqlite3BtreeOpen

[+] Call to strcmp with constant "nolock" found in sqlite3.c/sqlite3BtreeOpen

[+] Call to strcmp with constant "immutable" found in sqlite3.c/sqlite3BtreeOpen

[+] Call to memcmp with constant "\xd9\xd5\x05\xf9 \xa1c" found in sqlite3.c/syncJournal

[+] Found alphanum constant "char" in sqlite3.c/yy_reduce

[+] Found alphanum constant "clob" in sqlite3.c/yy_reduce

[+] Found alphanum constant "text" in sqlite3.c/yy_reduce

[+] Found alphanum constant "blob" in sqlite3.c/yy_reduce

[+] Found alphanum constant "real" in sqlite3.c/yy_reduce

[+] Found alphanum constant "floa" in sqlite3.c/yy_reduce

[+] Found alphanum constant "doub" in sqlite3.c/yy_reduce

[+] Call to strcmp with constant "sqlite_sequence" found in sqlite3.c/sqlite3StartTable

[+] Call to memcmp with constant "file:" found in sqlite3.c/sqlite3ParseUri

[+] Call to memcmp with constant "localhost" found in sqlite3.c/sqlite3ParseUri

[+] Call to memcmp with constant "vfs" found in sqlite3.c/sqlite3ParseUri

[+] Call to memcmp with constant "cache" found in sqlite3.c/sqlite3ParseUri

[+] Call to memcmp with constant "mode" found in sqlite3.c/sqlite3ParseUri

[+] Call to strcmp with constant "localtime" found in sqlite3.c/isDate

[+] Call to strcmp with constant "unixepoch" found in sqlite3.c/isDate

[+] Call to strncmp with constant "weekday " found in sqlite3.c/isDate

[+] Call to strncmp with constant "start of " found in sqlite3.c/isDate

[+] Call to strcmp with constant "month" found in sqlite3.c/isDate

[+] Call to strcmp with constant "year" found in sqlite3.c/isDate

[+] Call to strcmp with constant "hour" found in sqlite3.c/isDate

[+] Call to strcmp with constant "minute" found in sqlite3.c/isDate

[+] Call to strcmp with constant "second" found in sqlite3.c/isDate

over@bubuntu:~/workz/sqlite3$ sort -u /tmp/sqlite.dict

"@ "

"BINARY"

"blob"

"cache"

"char"

"clob"

"doub"

"file:"

"floa"

"hour"

"immutable"

"localhost"

"localtime"

":memory:"

"minute"

"mode"

"month"

"nolock"

"real"

"second"

"SQLite format 3"

"sqlite_sequence"

"start of "

"text"

"unixepoch"

"unix-excl"

"vfs"

"weekday "

"\xd9\xd5\x05\xf9 \xa1c"

"year"

On libxml2 (here is a library with a lot of test cases / utilities that raises the noise ratio in the tokens extracted - cf xmlShell* for example):

over@bubuntu:~/workz/libxml2$ CC=/home/over/workz/afl-2.35b/afl-clang-fast ./autogen.sh && AFL_TOKEN_FILE=/tmp/xml.dict make

[...]

afl-clang-fast 2.35b by <lszekeres@google.com>

afl-llvm-tokencap-pass 2.35b by <0vercl0k@tuxfamily.org>

afl-llvm-pass 2.35b by <lszekeres@google.com>

[+] Instrumented 668 locations (non-hardened mode, ratio 100%).

[+] Call to strcmp with constant "UTF-8" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UTF8" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UTF-16" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UTF16" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-10646-UCS-2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UCS-2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UCS2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-10646-UCS-4" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UCS-4" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "UCS4" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-1" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-LATIN-1" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO LATIN 1" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-LATIN-2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO LATIN 2" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-3" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-4" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-5" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-6" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-7" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-8" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-8859-9" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "ISO-2022-JP" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "SHIFT_JIS" found in encoding.c/xmlParseCharEncoding__internal_alias

[+] Call to strcmp with constant "EUC-JP" found in encoding.c/xmlParseCharEncoding__internal_alias

[...]

afl-clang-fast 2.35b by <lszekeres@google.com>

afl-llvm-tokencap-pass 2.35b by <0vercl0k@tuxfamily.org>

afl-llvm-pass 2.35b by <lszekeres@google.com>

[+] Instrumented 1214 locations (non-hardened mode, ratio 100%).

[+] Call to strcmp with constant "exit" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "quit" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "help" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "validate" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "load" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "relaxng" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "save" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "write" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "grep" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "free" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "base" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "setns" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "setrootns" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "xpath" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "setbase" found in debugXML.c/xmlShell__internal_alias

[+] Call to strcmp with constant "whereis" found in debugXML.c/xmlShell__internal_alias

[...]

over@bubuntu:~/workz/libxml2$ sort -u /tmp/xml.dict

"307377"

"base"

"c14n"

"catalog"

"<![CDATA["

"chvalid"

"crazy:"

"debugXML"

"dict"

"disable SAX"

"document"

"encoding"

"entities"

"EUC-JP"

"exit"

"fetch external entities"

"file:///etc/xml/catalog"

"free"

"ftp://"

"gather line info"

"grep"

"hash"

"help"

"HTMLparser"

"HTMLtree"

"http"

"HTTP/"

"huge:"

"huge:attrNode"

"huge:commentNode"

"huge:piNode"

"huge:textNode"

"is html"

"ISO-10646-UCS-2"

"ISO-10646-UCS-4"

"ISO-2022-JP"

"ISO-8859-1"

"ISO-8859-2"

"ISO-8859-3"

"ISO-8859-4"

"ISO-8859-5"

"ISO-8859-6"

"ISO-8859-7"

"ISO-8859-8"

"ISO-8859-9"

"ISO LATIN 1"

"ISO-LATIN-1"

"ISO LATIN 2"

"ISO-LATIN-2"

"is standalone"

"is valid"

"is well formed"

"keep blanks"

"list"

"load"

"nanoftp"

"nanohttp"

"parser"

"parserInternals"

"pattern"

"quit"

"relaxng"

"save"

"SAX2"

"SAX block"

"SAX function attributeDecl"

"SAX function cdataBlock"

"SAX function characters"

"SAX function comment"

"SAX function elementDecl"

"SAX function endDocument"

"SAX function endElement"

"SAX function entityDecl"

"SAX function error"

"SAX function externalSubset"

"SAX function fatalError"

"SAX function getEntity"

"SAX function getParameterEntity"

"SAX function hasExternalSubset"

"SAX function hasInternalSubset"

"SAX function ignorableWhitespace"

"SAX function internalSubset"

"SAX function isStandalone"

"SAX function notationDecl"

"SAX function reference"

"SAX function resolveEntity"

"SAX function setDocumentLocator"

"SAX function startDocument"

"SAX function startElement"

"SAX function unparsedEntityDecl"

"SAX function warning"

"schemasInternals"

"schematron"

"setbase"

"setns"

"setrootns"

"SHIFT_JIS"

"sql:"

"substitute entities"

"test/threads/invalid.xml"

"total"

"tree"

"tutor10_1"

"tutor10_2"

"tutor3_2"

"tutor8_2"

"UCS-2"

"UCS2"

"UCS-4"

"UCS4"

"user data"

"UTF-16"

"UTF16"

"UTF-16BE"

"UTF-16LE"

"UTF-8"

"UTF8"

"valid"

"validate"

"whereis"

"write"

"xinclude"

"xmlautomata"

"xmlerror"

"xmlIO"

"xmlmodule"

"xmlreader"

"xmlregexp"

"xmlsave"

"xmlschemas"

"xmlschemastypes"

"xmlstring"

"xmlunicode"

"xmlwriter"

"xpath"

"xpathInternals"

"xpointer"

Performance wise - here is what we are looking at on libpng (+0.283s):

over@bubuntu:~/workz/lpng1625$ make clean && time AFL_TOKEN_FILE=/tmp/png.dict make && make clean && time make

[...]

real 0m12.320s

user 0m11.732s

sys 0m0.360s

[...]

real 0m12.037s

user 0m11.436s

sys 0m0.384s

Last words

I am very interested in hearing from you if you give a shot to this analysis pass on your code-base and / or your fuzzing sessions, so feel free to hit me up! Also, note that libfuzzer supports the same feature and is compatible with afl's dictionary syntax - so you get it for free!

Here is a list of interesting articles talking about transformation/analysis passes that I recommend you read if you want to know more:

- Turning Regular Code Into Atrocities With LLVM

- llvm-functionpass-kryptonite-obfuscater.cpp

- quarkslab/llvm-passes

- llvm/lib/Analysis

- llvm/lib/Transforms

Special shout-outs to my proofreaders: yrp, mongo & jonathan.

Go hax clang and or LLVM!